
Urban public health crises and epidemic emergencies demand rapid and large-scale disinfection operations to ensure environmental safety. Conventional single-agent approaches, such as unmanned aerial vehicles (UAVs) or road-based spraying vehicles, are constrained by limited coverage, high energy consumption, and insufficient robustness. In dense and dynamic urban environments, complex road networks, population mobility, and real-time environmental variations further exacerbate operational challenges.

Recent studies have explored UAV-based disinfection, ground vehicle optimization, and multi-agent coordination. For UAVs, Lu et al.[1] developed multi-UAV cooperative planning for dynamic obstacle avoidance, while Vasquez-Gomez et al.[2] optimized spray uniformity and coverage. Dorling et al.[3] and Vanegas et al.[4] integrated energy and terrain constraints, and Agatz et al.[5] proposed heuristic optimization that balances coverage and energy. Li et al.[6], Wang et al.[7], and Jiang et al.[8] extended multi-objective UAV planning under urban wind fields. Despite this progress, these methods remain limited to static or small-scale tasks and lack adaptability for prolonged, heterogeneous operations[3−9].

Ground vehicle-based research builds on the classical Vehicle Routing Problem (VRP). Toth & Vigo[10] and Bräysy & Gendreau[11] established theoretical frameworks for route optimization with time-window constraints, while Hulagu et al.[12] integrated energy and environmental objectives. Liang et al.[13] and Ighravwe et al.[14] examined dynamic and sustainable routing for urban sanitation. Yet road-constrained vehicles cannot fully cover off-road or pedestrian areas.

Dual-agent UAV–vehicle collaboration models have been proposed[15−20], but most studies remain limited to static coordination or two-agent coupling. Systematic frameworks integrating aerial, vehicular, and human operators are scarce, and robustness under complex disturbances has not been adequately validated[9,16−21].

Dynamic task scheduling and reinforcement-learning-based optimization have recently emerged[22−25], enabling adaptive coalition formation and load balancing. However, they rarely address the heterogeneous, multi-objective trade-offs required for real-world urban disinfection.

This study proposes an intelligent multi-level and multi-type collaborative framework combining UAVs, large spraying vehicles, small electric sprayers, and ground personnel. The system jointly optimizes task allocation, path planning, and dynamic scheduling through multi-objective optimization and reinforcement learning. The key innovations are: (1) A unified optimization model balancing operational efficiency, energy consumption, and robustness under multi-agent constraints. (2) A cooperative path-planning mechanism integrating improved NSGA-II and reinforcement learning for real-time adaptability. (3) A dynamic scheduling and fault-tolerant architecture ensuring continuous operation under environmental or equipment disturbances.

This work contributes a scalable and adaptive decision framework for public-health disinfection and similar urban emergency tasks.

The main contributions of this work can be summarized as follows:

(1) We propose a unified multi-level and multi-type air–ground–human collaborative framework for public health disinfection, which explicitly models heterogeneous agent capabilities, operational constraints, and task characteristics within a single coordinated system.

(2) A hybrid decision-making strategy is developed by coupling offline multi-objective task allocation with online adaptive scheduling, enabling robust coordination under dynamic task demands and environmental disturbances.

(3) Extensive comparative and ablation experiments are conducted under consistent experimental settings to validate the effectiveness, robustness, and practical deployability of the proposed framework in complex urban scenarios.

Research related to this work mainly involves multi-agent task allocation, path planning and coverage strategies, and heterogeneous collaborative frameworks integrating aerial, ground, and human agents. This section reviews representative studies in these areas, and highlights the limitations that motivate the proposed framework.

(1) Task allocation in multi-agent systems

Task allocation is a fundamental problem in multi-agent systems and has been widely studied in robotics, logistics, and autonomous systems. Classical approaches are typically formulated as combinatorial or multi-objective optimization problems, aiming to minimize total cost, completion time, or resource consumption. Representative methods include auction-based mechanisms, mixed-integer programming, and evolutionary algorithms, which perform effectively under static or mildly dynamic environments.

With the increasing complexity of real-world applications, learning-based approaches, such as reinforcement learning and its variants, have been introduced to enable adaptive task allocation under uncertainty. These methods improve flexibility and scalability, but often require extensive training data, and may suffer from stability issues when facing highly heterogeneous agents or rapidly changing task demands.

Despite these advances, most existing task allocation studies assume homogeneous agents, or only consider limited heterogeneity. The diversity in sensing capability, mobility constraints, and operational roles among UAVs, ground vehicles, and human operators is rarely modeled within a unified allocation framework, which limits the applicability of these methods to complex public health disinfection scenarios.

(2) Path planning and coverage strategies

Path planning and coverage control have been extensively investigated for both aerial and ground robotic platforms. UAV-based coverage planning typically focuses on maximizing area coverage efficiency, while avoiding obstacles and respecting flight constraints. Grid-based decomposition, sampling-based planners, and coverage path planning methods are commonly adopted in aerial disinfection and surveillance tasks.

In contrast, ground vehicle path planning is often constrained by road networks, accessibility, and turning limitations. Graph-based shortest-path algorithms and route optimization methods are widely used to ensure feasibility and efficiency in urban environments. Human-assisted operations introduce additional flexibility, but also uncertainty due to variable execution speeds and subjective decision-making.

While these methods are effective for individual agent types, most existing studies treat aerial, ground, and human agents independently. Directly combining heterogeneous path planning strategies without a unified coordination mechanism often leads to inefficiencies, conflicts, or suboptimal global performance, particularly in time-critical and large-scale disinfection tasks.

(3) Heterogeneous air–ground–human collaborative frameworks

Recently, increasing attention has been given to heterogeneous collaborative systems that integrate multiple agent types. Several studies explore cooperation between UAVs and ground robots to leverage complementary advantages, such as aerial perception and ground-level execution. Other works incorporate human participation to enhance adaptability in complex or uncertain environments.

However, most existing heterogeneous collaboration frameworks focus on specific agent combinations or isolated subsystems, such as perception sharing or communication protocols. Comprehensive frameworks that simultaneously address task allocation, path planning, and adaptive scheduling across aerial, ground, and human agents remain limited. Moreover, robustness against dynamic task changes, agent failures, and environmental disturbances is often insufficiently considered.

In contrast to existing approaches, this work emphasizes a system-level collaborative framework that unifies heterogeneous agents through hybrid offline multi-objective optimization and online adaptive scheduling. By explicitly modeling agent diversity and integrating predictive coordination mechanisms, the proposed framework aims to bridge the gap between theoretical multi-agent methods and practical deployment requirements in public health disinfection operations.

Unlike studies that focus on improving a single task allocation or path planning algorithm, this work aims to provide a system-level collaborative framework that integrates heterogeneous agents, multiple optimization paradigms, and adaptive scheduling mechanisms to support real-world public health disinfection operations.


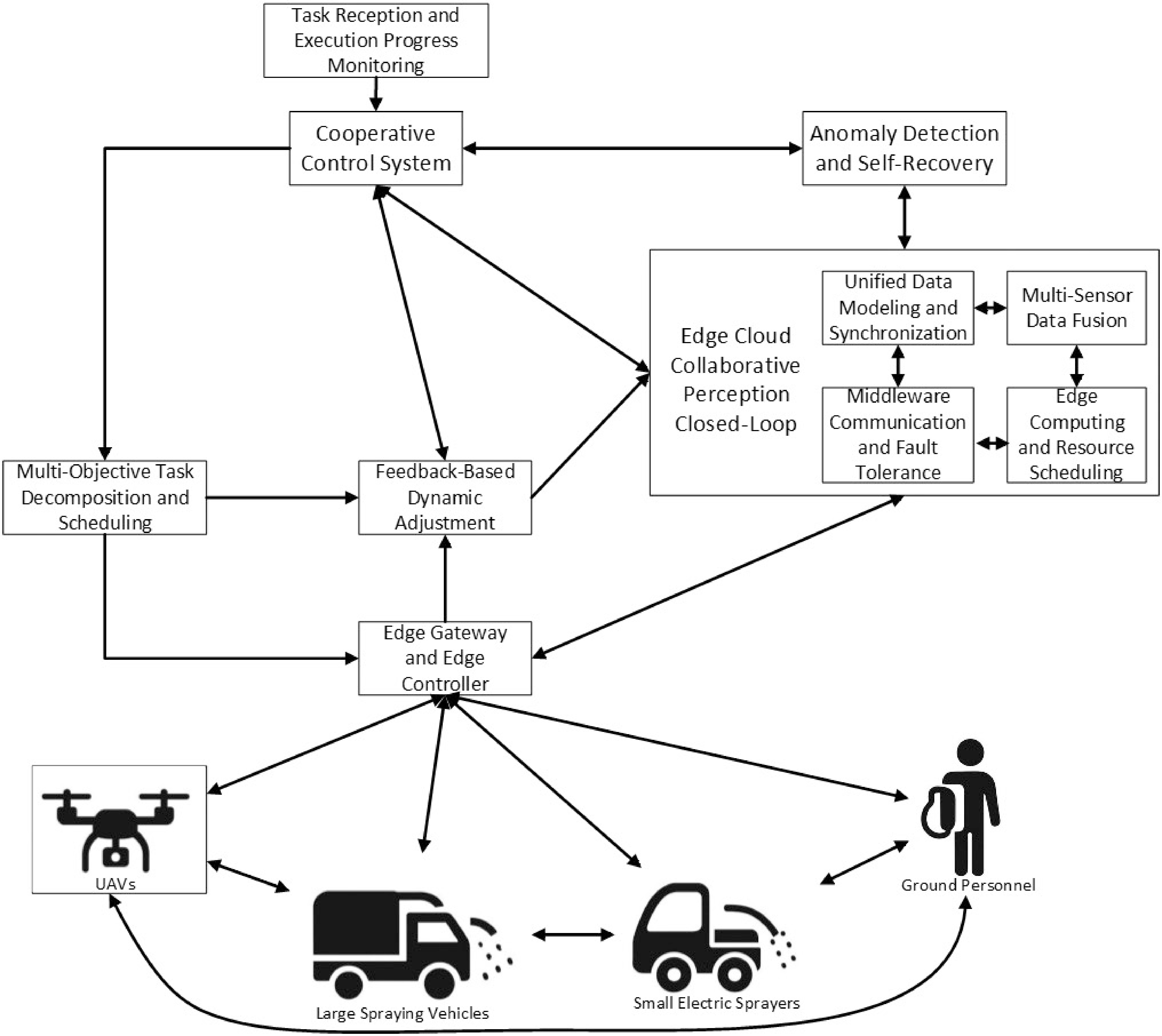

The proposed system consists of heterogeneous agents: UAVs for aerial coverage, large spraying vehicles for major roads, small electric vehicles for narrow streets, and ground personnel for fine-grained operations. Agents communicate with a central scheduling platform through 4G/5G or IoT protocols. Edge-computing nodes perform local optimization when connectivity degrades, ensuring continuous control.

The architecture forms a closed loop of perception → decision → execution → feedback, enabling real-time monitoring, adaptive scheduling, and anomaly recovery.

Figure 1 systematically illustrates the collaborative control framework of UAVs, large spraying vehicles, small electric spraying vehicles, and ground personnel in public disinfection tasks. The overall system architecture is organized around a closed-loop mechanism of 'perception → decision → execution → feedback', encompassing multiple functional modules and hierarchical support platforms.

Task scenario and metrics


For effective disinfection task planning, it is necessary to partition the task area and define corresponding evaluation metrics. Let the disinfection task area be denoted as $ A $, which is partitioned into $ n $ subregions, $ A=\left\{{A}_{1},{A}_{2},\ldots ,{A}_{n}\right\} $. The task set is $ T=\left\{{T}_{1},{T}_{2},\ldots ,{T}_{M}\right\} $, where $ {T}_{i}\subseteq A $ is the region of the $ i $-th task.

(1) Coverage rate (CR)

CR measures the proportion of the task area completed by the operational platforms. Its priority-weighted form, the weighted coverage rate (WCR), is calculated as:

$ WCR=\dfrac{\displaystyle\sum\nolimits_{i=1}^{M}{w}_{i}\cdot {\delta }_{i}\cdot \left| {T}_{i}\right| }{\displaystyle\sum\nolimits_{i=1}^{M}{w}_{i}\cdot \left| {T}_{i}\right| } $ (1)

where $ \left| {T}_{i}\right| $ is the area of the $ i $-th task region $ {T}_{i} $, $ {\delta }_{i} $ is its completion indicator, and $ {w}_{i} $ is its priority weight; $ {\delta }_{i}=1 $ if $ {T}_{i} $ has been covered and $ {\delta }_{i}=0 $ otherwise.

(2) Energy consumption (EC)

EC measures the total energy expended by all platforms during task execution:

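$ EC=\sum\limits_{k=1}^{K}\sum\limits_{j=1}^{{n}_{k}}{e}_{k,j} $ (2)

where $ K $ is the total number of platforms (including UAVs, vehicles, and ground personnel), $ {n}_{k} $ is the number of tasks executed by platform $ k $, and $ {e}_{k,j} $ is the energy consumed by platform $ k $ on its $ j $-th task.

(3) Working time (WT)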

WT measures the maximum time required to complete all tasks:

$ WT=\underset{k=1,2,\ldots ,K}{\max }\sum\limits_{j=1}^{{n}_{k}}{t}_{k,j} $ (3)

where $ {t}_{k,j} $ is the working time of platform $ k $ on task $ j $. This metric evaluates the overall efficiency of the task schedule.

Additionally, working efficiency (WE) is introduced to assess the utilization of time and resources during task execution, reflecting the effectiveness of the scheduling scheme. It is defined as the ratio of total task coverage to total WT:

$ WE=\dfrac{\displaystyle\sum\nolimits_{i=1}^{M}{w}_{i}\cdot {\delta }_{i}}{\displaystyle\sum\nolimits_{k=1}^{K}\displaystyle\sum\nolimits_{i=1}^{M}{t}_{k,i}{x}_{k,i}} $ (4)

where $ {t}_{k,i} $ is the time required for platform $ k $ to execute task $ {T}_{i} $, and $ {x}_{k,i} $ is the task assignment variable, with $ {x}_{k,i}=1 $ if platform $ k $ is assigned to subregion $ {T}_{i} $ and $ {x}_{k,i}=0 $ otherwise.

The WT is defined as the maximum execution time among all agents rather than the total time. This definition reflects the system-level makespan of collaborative disinfection tasks, as the overall mission is only completed when the last agent finishes its assigned workload. Therefore, WT is more suitable for evaluating coordination efficiency and bottleneck performance in heterogeneous, multi-agent systems.
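As a concrete reading of Eqs. (1)–(4), the following minimal Python sketch computes WCR, EC, WT, and WE for a toy instance. It assumes a matrix layout in which `t[k][i]`, `e[k][i]`, and `x[k][i]` are indexed by platform and task region, so that the sums over a platform's own tasks become sums over its assigned regions. The function name and all numbers are illustrative and are not taken from the experiments.

```python
def basic_metrics(w, delta, area, t, e, x):
    """Compute WCR, EC, WT and WE for K platforms and M task regions.

    w, delta, area: length-M lists (priority weight, completion flag, area).
    t, e, x: K-by-M nested lists (time, energy, 0/1 assignment).
    """
    K, M = len(x), len(w)
    # Eq. (1): priority- and area-weighted share of completed regions.
    wcr = (sum(w[i] * delta[i] * area[i] for i in range(M))
           / sum(w[i] * area[i] for i in range(M)))
    # Eq. (2): energy summed over every assigned (platform, region) pair.
    ec = sum(e[k][i] * x[k][i] for k in range(K) for i in range(M))
    # Busy time of each platform over its assigned regions.
    busy = [sum(t[k][i] * x[k][i] for i in range(M)) for k in range(K)]
    # Eq. (3): makespan, i.e. the busy time of the slowest platform.
    wt = max(busy)
    # Eq. (4): weighted completed tasks per unit of total busy time.
    we = sum(w[i] * delta[i] for i in range(M)) / sum(busy)
    return {"WCR": wcr, "EC": ec, "WT": wt, "WE": we}


# Toy instance: 2 platforms, 3 regions (region 2 is assigned but unfinished).
w, delta, area = [2, 1, 1], [1, 1, 0], [100, 50, 50]
x = [[1, 0, 1], [0, 1, 0]]
t = [[10, 0, 5], [0, 8, 0]]
e = [[3, 0, 1], [0, 2, 0]]
metrics = basic_metrics(w, delta, area, t, e, x)
```

In this instance the summed busy time is 23 while WT is 15, which illustrates why the makespan, rather than the total time, indicates when the mission is finished.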

(4) Redundancy (RD)

RD reflects the degree to which task areas are covered multiple times:

$ RD=\dfrac{\displaystyle\sum\nolimits_{i=1}^{M}\left(\displaystyle\sum\nolimits_{k=1}^{K}{1}_{k,i}-1\right)\cdot \left| {T}_{i}\right| }{\displaystyle\sum\nolimits_{i=1}^{M}\left| {T}_{i}\right| } $ (5)

where $ {1}_{k,i} $ is an indicator variable, with $ {1}_{k,i}=1 $ if platform $ k $ covers task $ {T}_{i} $ and $ {1}_{k,i}=0 $ otherwise.

(5) Robustness (RB)

RB measures the system's ability to maintain task coverage under partial platform failures or anomalies:

$ RB=\dfrac{\displaystyle\sum\nolimits_{i=1}^{M}\delta _{i}^{fail}\cdot \left| {T}_{i}\right| }{\displaystyle\sum\nolimits_{i=1}^{M}\left| {T}_{i}\right| } $ (6)

where $ \delta _{i}^{fail}=1 $ if task region $ {T}_{i} $ is still covered when some platforms fail or behave abnormally, and $ \delta _{i}^{fail}=0 $ otherwise.

(6) Task balance (TB)

TB evaluates the equity of task allocation among different platforms:

$ TB=1-\dfrac{\sqrt{\dfrac{1}{K}\displaystyle\sum\nolimits_{k=1}^{K}{({{n}_{k}}-\tilde{n})}^{2}}}{\tilde{n}} $ (7)

where $ {n}_{k} $ is the number of tasks assigned to platform $ k $, and $ \tilde{n}=\dfrac{1}{K}\displaystyle\sum\nolimits_{k=1}^{K}{n}_{k} $ is the mean number of tasks per platform.

(7) Coverage continuity (CC)

CC measures the continuity of task coverage, avoiding gaps or missed areas:

$ CC=\dfrac{\displaystyle\sum\nolimits_{i=1}^{M}gaps({T}_{i})}{\displaystyle\sum\nolimits_{i=1}^{M}\left| {T}_{i}\right| } $ (8)

where $ gaps({T}_{i}) $ represents the area of gaps not continuously covered within task region $ {T}_{i} $.
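Eqs. (5), (7), and (8) reduce to a few lines of arithmetic. The sketch below is illustrative only: `cover[k][i]` plays the role of the coverage indicator in Eq. (5), `n` holds the per-platform task counts of Eq. (7), and the function names and sample numbers are assumptions.

```python
from math import sqrt


def redundancy(cover, area):
    """Eq. (5): area-weighted number of extra passes per task region.

    A region covered by no platform contributes a negative term.
    """
    M = len(area)
    extra = sum((sum(row[i] for row in cover) - 1) * area[i] for i in range(M))
    return extra / sum(area)


def task_balance(n):
    """Eq. (7): one minus the coefficient of variation of the task counts."""
    mean = sum(n) / len(n)
    std = sqrt(sum((v - mean) ** 2 for v in n) / len(n))
    return 1 - std / mean


def gap_ratio(gaps, area):
    """Eq. (8): total gap area divided by total task area."""
    return sum(gaps) / sum(area)


area = [100, 50, 50]
cover = [[1, 1, 0], [0, 1, 1]]            # region 1 is covered twice
rd = redundancy(cover, area)              # 50 / 200
tb_even = task_balance([2, 2])            # perfectly balanced
tb_skewed = task_balance([3, 1])          # std 1, mean 2
cc = gap_ratio([5, 0, 5], area)           # 10 / 200
```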

(8) Fault tolerance rate (FTR)

FTR reflects the system's ability to complete tasks even when some platforms fail:

$ FTR=\dfrac{\displaystyle\sum\nolimits_{i=1}^{M}\left| {T}_{i}\right| \cdot 1_{{T}_{i}}^{res}}{\displaystyle\sum\nolimits_{i=1}^{M}\left| {T}_{i}\right| } $ (9)

where $ 1_{{T}_{i}}^{res}=1 $ if task $ {T}_{i} $ is still completed after a platform failure, and $ 1_{{T}_{i}}^{res}=0 $ otherwise.

(9) Conflict rate (CF)

CF is defined as the ratio of actual conflict events to the total number of potential conflicts:

$ CF=\dfrac{\displaystyle\sum\nolimits_{k=1}^{K}\displaystyle\sum\nolimits_{l=1,l\neq k}^{K}\displaystyle\sum\nolimits_{t=0}^{WT}\Pi (\left|\left|{P}_{k}(t)-{P}_{l}(t)\right|\right|\leqslant {d}_{\min })}{K\cdot (K-1)\cdot WT} $ (10)

where $ {P}_{k}(t) $ and $ {P}_{l}(t) $ are the positions of platforms $ k $ and $ l $ at time $ t $, $ {d}_{\min } $ is the minimum separation distance, and $ \Pi (\cdot ) $ is the indicator function.

Optimization objectives and constraints


The primary optimization objectives are Weighted Coverage Rate (WCR), WT/WE, EC, and RB. Other metrics, such as RD, TB, CC, CF, and FTR, serve as constraints, evaluation metrics, or auxiliary references—they are not directly maximized or minimized, but are analyzed and adjusted post-optimization. The core objectives of the multi-objective optimization in this study are:

Maximization of critical area coverage

$ \max {F}_{1}=WCR=\dfrac{\displaystyle\sum\nolimits_{i=1}^{M}{w}_{i}\cdot {\delta }_{i}\cdot \left| {T}_{i}\right| }{\displaystyle\sum\nolimits_{i=1}^{M}{w}_{i}\cdot \left| {T}_{i}\right| } $

Minimization of WT

$ \min {F}_{2}=WT=\underset{k=1,2,\ldots ,K}{\max }\displaystyle\sum\limits_{j=1}^{{n}_{k}}{t}_{k,j} $

Minimization of EC

$ \min {F}_{3}=EC=\displaystyle\sum\limits_{k=1}^{K}\displaystyle\sum\limits_{j=1}^{{n}_{k}}{e}_{k,j} $

Maximization of system RB

$ \max {F}_{4}=RB=\dfrac{\displaystyle\sum\nolimits_{i=1}^{M}\delta _{i}^{fail}\cdot \left| {T}_{i}\right| }{\displaystyle\sum\nolimits_{i=1}^{M}\left| {T}_{i}\right| } $

(1) Energy constraint

Each platform's total EC during task execution must not exceed its available energy limit $ E_{k}^{\max } $:

$ \displaystyle\sum\limits_{j=1}^{{n}_{k}}{e}_{k,j}\leq E_{k}^{\max },k=1,2,\ldots ,K $

(2) Path conflict constraint

The operation paths of different platforms must not overlap or conflict at the same time. Let $ {P}_{k}(t) $ and $ {P}_{l}(t) $ denote the positions of platforms $ k $ and $ l $ at time $ t $. Then:

$ \left|\left|{P}_{k}(t)-{P}_{l}(t)\right|\right|\geqslant {d}_{\min },\forall k\neq l,t\in \left[0,WT\right] $

where $ {d}_{\min } $ is the minimum separation distance.

(3) Safety distance constraint

For UAVs, vehicles, and ground personnel, all platforms must maintain a minimum safety distance $ {d}_{safe} $:

$ \left|\left|{P}_{k}(t)-{P}_{l}(t)\right|\right|\geq {d}_{safe},\forall k\neq l $

(4) Crowd density constraint

In high-density areas $ {R}_{h}\subset A $, the number and speed of operating platforms are limited:

$ {n}_{k}({R}_{h})\leqslant N_{h}^{\max },{v}_{k}({R}_{h})\leqslant V_{h}^{\max } $

where $ {n}_{k}({R}_{h}) $ and $ {v}_{k}({R}_{h}) $ are the platform count and operating speed within $ {R}_{h} $, and $ N_{h}^{\max } $ and $ V_{h}^{\max } $ are the corresponding upper limits.

(5) Coverage continuity constraint

Tasks within a region must be continuously covered without significant gaps. Let $ gaps({T}_{i}) $ denote the gap area within task region $ {T}_{i} $. Then:

$ gaps({T}_{i})\leqslant \varepsilon \cdot \left| {T}_{i}\right| ,i=1,2,\ldots ,M $

where $ \varepsilon $ is the allowable gap ratio.

Intelligent task allocation and scheduling strategy

Multi-objective task allocation formulation


To achieve efficient coordination among heterogeneous agents, the task allocation problem is formulated as a constrained multi-objective optimization problem. The task allocation aims to simultaneously optimize multiple system-level objectives, which can be expressed as:

$ \min F=\left\{{f}_{1}(X),{f}_{2}(X),\ldots ,{f}_{K}(X)\right\} $ (11)

where $ X $ denotes the task–agent assignment matrix. Specifically, the considered objectives include task completion efficiency, which minimizes the overall WT, defined as the maximum execution time among all agents; operational cost, which accounts for EC and traversal distance; and workload balance, which reduces excessive task concentration on individual agents.

Each objective function is normalized to ensure comparable scales during optimization.
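The text does not state which normalization is used. One common choice is min-max scaling over the current candidate set, sketched below as an assumption; `min_max_normalize` is a hypothetical helper name and the sample values are illustrative.

```python
def min_max_normalize(objs):
    """Scale each objective column to [0, 1] across the candidate solutions.

    objs: list of objective vectors, one per candidate.
    A column with no spread (max == min) is mapped to 0.0.
    """
    cols = list(zip(*objs))
    lows = [min(c) for c in cols]
    highs = [max(c) for c in cols]
    return [
        [(v - lo) / (hi - lo) if hi > lo else 0.0
         for v, lo, hi in zip(row, lows, highs)]
        for row in objs
    ]


# Three candidates with (WT in minutes, EC in Wh, imbalance) on very different scales.
raw = [[10, 200, 0.5], [20, 100, 0.5], [15, 150, 0.5]]
scaled = min_max_normalize(raw)
```

After scaling, a one-unit difference means the same thing in every objective, so no single objective dominates purely because of its physical unit.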

The optimization is subject to the following constraints:

$ \begin{aligned}&C1\colon \sum\limits_{i=1}^{N}{x}_{ij}=1,\;\forall {t}_{j}\in T,\\ & C2\colon {x}_{ij}\in \left\{0,1\right\},\;\forall {a}_{i}\in A,\;\forall {t}_{j}\in T,\\ &C3\colon {x}_{ij}=0,\;\text{if}\;{a}_{i}\;\text{is\;incompatible\;with}\;{{t}}_{j},\\ & C4\colon {T}_{i}(X)\leqslant {T}_{i}{}^{\max },\;\forall {a}_{i}\in A \end{aligned} $ (12) where, Constraint C1 ensures that each task is assigned to exactly one agent, Constraint C2 defines the binary nature of the decision variables, Constraint C3 enforces agent–task compatibility, and Constraint C4 limits the maximum allowable WT of each agent.
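Constraints C1 to C4 can be checked mechanically for any candidate assignment matrix. The following sketch is one possible check, assuming that the WT of an agent is the sum of the execution times of its assigned tasks; the names `check_assignment`, `compatible`, `task_time`, and `t_max`, as well as the sample data, are hypothetical.

```python
def check_assignment(x, compatible, task_time, t_max):
    """Return the list of violated constraints for assignment matrix x[i][j].

    i indexes agents, j indexes tasks. An empty list means x is feasible.
    """
    n_agents, n_tasks = len(x), len(x[0])
    violations = []
    # C1: every task is assigned to exactly one agent.
    for j in range(n_tasks):
        if sum(x[i][j] for i in range(n_agents)) != 1:
            violations.append(("C1", j))
    for i in range(n_agents):
        for j in range(n_tasks):
            # C2: decision variables are binary.
            if x[i][j] not in (0, 1):
                violations.append(("C2", i, j))
            # C3: an agent may only take tasks it is compatible with.
            elif x[i][j] == 1 and not compatible[i][j]:
                violations.append(("C3", i, j))
        # C4: the agent's working time stays within its limit.
        load = sum(task_time[i][j] * x[i][j] for j in range(n_tasks))
        if load > t_max[i]:
            violations.append(("C4", i))
    return violations


# Two agents (e.g. a UAV and a vehicle), three tasks.
compatible = [[True, True, False], [True, False, True]]
task_time = [[5, 4, 9], [6, 9, 3]]
t_max = [10, 10]
feasible = check_assignment([[1, 1, 0], [0, 0, 1]], compatible, task_time, t_max)
infeasible = check_assignment([[1, 0, 1], [0, 0, 0]], compatible, task_time, t_max)
```

The second matrix leaves task 1 unassigned, gives agent 0 a task it cannot perform, and overloads agent 0, so three violations are reported.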

The binary decision variable $ {x}_{ij} $ is defined as:

$ {x}_{ij}=\begin{cases} 1,\;\text{if\;task}\;{t}_{j}\;\text{is\;assigned\;to\;agent}\;{a}_{i}\\ 0,\;\text{otherwise} \end{cases} $ (13)

The WT $ {T}_{i}(X) $ denotes the working time of agent $ {a}_{i} $ under assignment $ X $. Given the discrete and multi-objective nature of the problem, an improved NSGA-II algorithm is adopted to obtain a set of Pareto-optimal task allocation solutions. The specific improvements and parameter settings are described in the following subsection.

The proposed NSGA-II-based task allocation does not aim to redesign the classical evolutionary framework. Instead, several application-oriented adaptations are introduced to improve its effectiveness for heterogeneous public health disinfection tasks.

First, a task-oriented chromosome encoding is adopted to explicitly represent heterogeneous agent capabilities and operational constraints, enabling feasible assignment across aerial, ground, and human agents. Second, constraint-handling mechanisms are incorporated to penalize infeasible solutions that violate task accessibility or safety requirements. Third, the offline optimization results are designed to be compatible with the subsequent online adaptive scheduling module, ensuring smooth coordination between global planning and real-time execution.
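The encoding and penalty ideas above can be illustrated with a small sketch. It assumes an integer chromosome in which gene `j` stores the index of the agent that executes task `j` (which satisfies C1 and C2 by construction), a fixed penalty per incompatible gene, and two minimized objectives (makespan and energy). These choices, the function names, and the data are illustrative assumptions and not the paper's exact design; the ranking and crowding-distance steps of NSGA-II are omitted.

```python
def decode(chrom, n_agents):
    """Integer chromosome -> binary assignment matrix x[i][j]."""
    return [[1 if agent == i else 0 for agent in chrom] for i in range(n_agents)]


def evaluate(chrom, task_time, energy, compatible, penalty=1e3):
    """Return (makespan, energy), each penalized per incompatible gene."""
    loads = [0.0] * len(task_time)
    total_energy = 0.0
    violations = 0
    for j, i in enumerate(chrom):
        loads[i] += task_time[i][j]
        total_energy += energy[i][j]
        if not compatible[i][j]:
            violations += 1
    p = penalty * violations
    return (max(loads) + p, total_energy + p)


def dominates(f, g):
    """True if f is no worse than g everywhere and strictly better somewhere."""
    return all(a <= b for a, b in zip(f, g)) and any(a < b for a, b in zip(f, g))


def pareto_front(population, objs):
    """Keep the chromosomes whose objective vectors are not dominated."""
    return [c for c, f in zip(population, objs)
            if not any(dominates(g, f) for g in objs)]


task_time = [[5, 4, 9], [8, 9, 3]]
energy = [[2, 2, 5], [1, 6, 1]]
compatible = [[True, True, False], [True, False, True]]
population = [(0, 0, 1), (1, 0, 1), (0, 0, 0), (1, 1, 1)]
objs = [evaluate(c, task_time, energy, compatible) for c in population]
front = pareto_front(population, objs)
```

Here the two feasible chromosomes trade makespan against energy and both remain on the front, while the two infeasible ones are pushed out by the penalty.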

Cooperative path planning for heterogeneous agents


For UAVs, the task areas are typically high-priority or difficult-to-reach subregions. Considering flight altitude, range, and obstacle avoidance constraints, this study adopts a path planning approach based on a heuristic A* algorithm combined with an improved Rapidly-exploring Random Tree (RRT). The path planning objective function integrates minimizing flight distance, maximizing coverage, and ensuring obstacle avoidance safety:

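$ \min {J}_{U}=\sum\limits_{(p,q)\in {P}_{U}}{d}_{p,q}+{\lambda }_{c}(1-{C}_{coverage})+{\lambda }_{o}{O}_{risk} $ (14)

where $ {P}_{U} $ denotes the set of UAV path segments, $ {d}_{p,q} $ is the distance between waypoints $ p $ and $ q $, $ {C}_{coverage} $ is the coverage ratio, $ {O}_{risk} $ is the obstacle risk, and $ {\lambda }_{c} $ and $ {\lambda }_{o} $ are the corresponding weight coefficients.

Large spraying vehicles ($ {V}_{L} $) and small electric spraying vehicles ($ {V}_{S} $) are planned with an objective that combines travel distance and operation time:

$ \min {J}_{V}=\sum\limits_{i,j\in {P}_{V}}{d}_{i,j}+{\lambda }_{t}\sum\limits_{i\in {P}_{V}}{t}_{i} $ (15)

where $ {P}_{V} $ denotes the set of vehicle path nodes, $ {d}_{i,j} $ is the distance between nodes $ i $ and $ j $, $ {t}_{i} $ is the operation time at node $ i $, and $ {\lambda }_{t} $ is the time weight coefficient.

Ground personnel (H) primarily operate in complex or hazardous areas. Considering accessibility, task distribution, and safety, path planning is conducted using a grid-map-based graph search combined with shortest-path algorithms. The objective is to minimize walking distance and task completion time while ensuring safety constraints: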

$ \min {J}_{H}=\sum\limits_{(m,n)\in {P}_{H}}{d}_{m,n}+{\lambda }_{s}{S}_{risk} $ (16)

where $ {P}_{H} $ denotes the set of path nodes for personnel, $ {d}_{m,n} $ is the distance between nodes $ m $ and $ n $, $ {S}_{risk} $ is the safety risk along the path, and $ {\lambda }_{s} $ is the safety weight coefficient.

Under collision-avoidance constraints, local optimization algorithms that combine heuristic search with gradient-based adjustment are employed to optimize paths, balancing operation time, EC, and coverage. The path optimization objective function can be expressed as:

$ \min {J}_{CO}=\sum\limits_{k\in K}\left(\sum\limits_{(i,j)\in {P}_{k}}{d}_{i,j}+{\lambda }_{t}\sum\limits_{i\in {P}_{k}}{t}_{k,i}-{\lambda }_{c}{C}_{k}\right) $ (17)

where $ K $ represents the set of all platforms, $ {P}_{k} $ denotes the set of path nodes for platform $ k $, $ {d}_{i,j} $ is the distance between nodes $ i $ and $ j $, $ {t}_{k,i} $ is the operation time of platform $ k $ at node $ i $, $ {C}_{k} $ is the coverage achieved by platform $ k $, and $ {\lambda }_{t} $ and $ {\lambda }_{c} $ are weight coefficients.

The weight parameters are introduced to balance multiple optimization objectives with different physical meanings and scales. These weights reflect the relative importance of coverage effectiveness, operational cost, and execution time in public health disinfection tasks. Their values are determined based on task priority and empirical operational experience, rather than through automatic parameter optimization. To ensure fair comparison and reproducibility, a unified set of weight parameters is adopted for all experiments, including comparative evaluations and ablation studies.
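To make the role of the weights concrete, the sketch below evaluates the UAV objective of Eq. (14) for two candidate waypoint paths. The weight values `lam_c` and `lam_o`, the coverage and risk inputs, and the function name are hypothetical, since the text does not report numerical weights.

```python
from math import dist


def uav_path_cost(waypoints, coverage, obstacle_risk, lam_c=100.0, lam_o=50.0):
    """Eq. (14): path length + lam_c * (1 - coverage) + lam_o * obstacle risk."""
    length = sum(dist(p, q) for p, q in zip(waypoints, waypoints[1:]))
    return length + lam_c * (1.0 - coverage) + lam_o * obstacle_risk


short_path = [(0, 0), (3, 4), (3, 10)]           # length 5 + 6 = 11
long_path = [(0, 0), (0, 10), (3, 10), (3, 4)]   # length 10 + 3 + 6 = 19

# The longer path is assumed to sweep more of the target area.
cost_short = uav_path_cost(short_path, coverage=0.70, obstacle_risk=0.02)
cost_long = uav_path_cost(long_path, coverage=0.95, obstacle_risk=0.02)
```

With these illustrative weights the longer path scores lower (about 25 versus 42) because its coverage gain outweighs the extra distance; a much smaller `lam_c` would reverse the preference.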

It should be noted that the proposed path planning module focuses on high-level, task-oriented coverage planning, where paths are represented as sequences of waypoints or coverage regions. The dynamic and kinematic feasibility of the generated paths is ensured at the execution level by integrating standard motion planning and control modules, such as trajectory smoothing or model predictive control, depending on the specific agent type. This hierarchical design allows the framework to remain general and applicable to heterogeneous aerial, ground, and human agents with different motion constraints.
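At this waypoint level, the grid-based shortest-path search for ground personnel can be sketched with Dijkstra's algorithm. The sketch assumes a 4-connected grid, unit step length, and a per-cell risk value that is added to the step cost with weight `lam_s`, which is one simple way to approximate the objective in Eq. (16). The grid, the risk values, and the function name are illustrative assumptions.

```python
import heapq


def plan_grid_path(grid_risk, start, goal, lam_s=1.0):
    """Dijkstra search on a 4-connected grid.

    grid_risk[r][c] is a non-negative risk value, or None for an obstacle.
    Entering a cell costs 1 (step length) plus lam_s times its risk.
    Returns (total cost, list of cells) or (inf, []) if the goal is unreachable.
    """
    rows, cols = len(grid_risk), len(grid_risk[0])
    best = {start: 0.0}
    parent = {}
    heap = [(0.0, start)]
    while heap:
        cost, cell = heapq.heappop(heap)
        if cost > best[cell]:
            continue  # stale queue entry
        if cell == goal:
            path = [cell]
            while cell in parent:
                cell = parent[cell]
                path.append(cell)
            return cost, path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid_risk[nr][nc] is not None:
                new_cost = cost + 1.0 + lam_s * grid_risk[nr][nc]
                if new_cost < best.get((nr, nc), float("inf")):
                    best[(nr, nc)] = new_cost
                    parent[(nr, nc)] = cell
                    heapq.heappush(heap, (new_cost, (nr, nc)))
    return float("inf"), []


# 3x3 map: the centre is blocked and cell (1, 2) is hazardous.
grid = [
    [0, 0, 0],
    [0, None, 5],
    [0, 0, 0],
]
cost, path = plan_grid_path(grid, start=(0, 0), goal=(2, 2))
```

Both routes around the obstacle have the same length, but the search selects the left and bottom edges because the alternative passes through the hazardous cell.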

Dynamic scheduling and robustness enhancement


The cooperative scheduling network is designed as a hierarchical and closed-loop decision-making mechanism, rather than a single-stage planner. Its primary objective is to maintain robust coordination among heterogeneous agents under dynamic task demands and environmental disturbances.

At the offline planning stage, the multi-objective task allocation and path planning module generates an initial coordination scheme based on nominal task information and agent capabilities. This stage provides a globally consistent baseline solution that serves as the reference plan for execution.

During task execution, the system enters the online adaptive scheduling stage, where real-time feedback from agents, including task progress, execution delays, and local disturbances, is continuously monitored. When deviations from the reference plan exceed predefined thresholds, the scheduling network triggers localized adjustments instead of full re-planning, thereby reducing computational overhead and improving responsiveness.

In addition, predictive coordination modules are incorporated to estimate short-term task evolution and agent availability, enabling proactive scheduling decisions. This predictive capability allows the system to mitigate potential bottlenecks before they propagate through the collaborative process.

Through the interaction of offline optimization, online adaptation, and predictive feedback, the cooperative scheduling network forms a closed-loop coordination mechanism that enhances robustness and logical coherence while avoiding unnecessary system complexity.

The dynamic scheduling module is triggered when any of the following conditions is detected:

(1) Platform task delay exceeds the threshold: $ \Delta T > {\delta }_{T} $

(2) Platform EC is abnormal: $ \left| {E}_{k}-\overline{E}\right| > {\delta }_{E} $

(3) Task completion rate falls below the expected value: $ {\rho }_{k} < {\rho }_{\min } $

(4) A new task $ {T}_{new} $ is added.

Once triggered, the system constructs a new optimization objective function:

$ \min {J}_{dyn}={\alpha }_{1}\sum\limits_{i\in T}\Delta {T}_{i}+{\alpha }_{2}{\sum\limits_{k\in K}\left({L}_{k}(t)-\overline{L}(t)\right)}^{2}+{\alpha }_{3}{C}_{ik} $

where $ {L}_{k}(t) $ is the current load of platform $ k $, $ \overline{L}(t) $ is the average load across platforms, $ {C}_{ik} $ is the cost of reassigning a task in $ T $ to platform $ k $, and $ {\alpha }_{1} $, $ {\alpha }_{2} $, and $ {\alpha }_{3} $ are weight coefficients.
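The trigger conditions and the dynamic objective can be sketched in a few lines. The threshold names mirror the symbols in the trigger conditions, but all numerical values, the function names, and the treatment of the reassignment cost as a single scalar are assumptions made for illustration.

```python
def rescheduling_reasons(delay, energy, mean_energy, completion_rate, new_tasks,
                         delta_t, delta_e, rho_min):
    """Return the names of the trigger conditions that currently hold."""
    reasons = []
    if delay > delta_t:                          # (1) task delay too large
        reasons.append("delay")
    if abs(energy - mean_energy) > delta_e:      # (2) abnormal energy use
        reasons.append("energy")
    if completion_rate < rho_min:                # (3) completion rate too low
        reasons.append("completion")
    if new_tasks:                                # (4) new task arrived
        reasons.append("new_task")
    return reasons


def dynamic_cost(task_delays, loads, reassign_cost, alphas=(1.0, 1.0, 1.0)):
    """J_dyn: weighted sum of total delay, load imbalance and reassignment cost."""
    a1, a2, a3 = alphas
    mean_load = sum(loads) / len(loads)
    imbalance = sum((load - mean_load) ** 2 for load in loads)
    return a1 * sum(task_delays) + a2 * imbalance + a3 * reassign_cost


reasons = rescheduling_reasons(delay=12, energy=80, mean_energy=60,
                               completion_rate=0.9, new_tasks=[],
                               delta_t=10, delta_e=25, rho_min=0.8)
j_dyn = dynamic_cost(task_delays=[2, 0, 3], loads=[4, 2, 3],
                     reassign_cost=1.5, alphas=(1.0, 0.5, 2.0))
```

In this example only the delay condition fires, and the local adjustment would then be chosen among candidate reassignments by comparing their `dynamic_cost` values.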

The experimental evaluation is designed to validate the effectiveness of the proposed framework from three complementary perspectives. First, comparative experiments evaluate the overall performance of the proposed collaborative framework against representative baseline methods. Second, robustness-related experiments analyze the framework's adaptability under dynamic task changes and agent disturbances. Third, ablation studies systematically examine the contribution of individual system components. Together, these experiments aim to provide a comprehensive assessment of the proposed system-level design.

Experimental setup and scenario configuration


The experimental platforms consist of heterogeneous operational units, including six UAVs, two large spraying vehicles, eight small electric spraying vehicles, and 30 ground personnel (excluding drivers and operators of the vehicles). Different platforms undertake differentiated tasks based on their operational characteristics, payload capacities, and functional capabilities: UAVs perform aerial monitoring, localized spraying, and environmental recognition; large spraying vehicles handle main roads and large open areas; small electric spraying vehicles operate in narrow streets, complex terrains, and boundary areas; and ground personnel conduct auxiliary inspection, supplemental operations, and equipment maintenance.

The experiments are conducted in a simulated urban environment constructed from representative public health disinfection scenarios. The urban area covers several square kilometers and includes typical road networks, open spaces, and building-dense regions. Disinfection tasks are distributed across the area with varying spatial densities to reflect realistic operational conditions.

The agent set consists of heterogeneous platforms, including unmanned aerial vehicles (UAVs), ground vehicles, and human operators. Representative operational parameters, such as nominal speed ranges, task capacity, and accessibility constraints, are assigned according to common specifications reported in related studies. These parameters remain unchanged across all experiments, including comparative evaluations and ablation studies, to ensure fairness and reproducibility.

Task allocation is determined based on task density, road accessibility, and environmental complexity. The system first constructs a grid-based model in a Geographic Information System (GIS) environment, and generates an initial task set considering terrain features, obstacle distribution, and task priorities. Tasks are then assigned to each platform according to its operational capability, payload capacity, and time window constraints, ensuring workload balance and overall operational efficiency.

For algorithmic parameter settings, the task allocation module employs a hybrid multi-objective optimization, combining the improved NSGA-II and reinforcement learning. The optimization objectives include maximizing operational efficiency, minimizing EC, and balancing system load. To balance search accuracy and computational cost, the population size is set to 100, to ensure solution diversity while controlling computation; the maximum number of iterations is 200, verified through multiple simulations to provide a favorable trade-off between convergence speed and solution quality; the crossover probability is 0.8 to enhance global search capability; the mutation probability is 0.2 to introduce local perturbations, preventing convergence to local optima.
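The reported settings can be collected in a small configuration together with matching variation operators. The sketch below assumes an integer chromosome (gene `j` is the agent index for task `j`), one-point crossover, and a per-individual mutation that reassigns a single task; the text does not specify the operators, so these are illustrative choices, and the task and agent counts in the example are hypothetical.

```python
import random

POP_SIZE, MAX_GEN = 100, 200   # reported population size and iteration limit
P_CROSS, P_MUT = 0.8, 0.2      # reported crossover and mutation probabilities


def init_population(n_tasks, n_agents, rng):
    """Random initial population of integer chromosomes."""
    return [[rng.randrange(n_agents) for _ in range(n_tasks)]
            for _ in range(POP_SIZE)]


def crossover(a, b, rng):
    """One-point crossover, applied with probability P_CROSS."""
    if len(a) > 1 and rng.random() < P_CROSS:
        cut = rng.randrange(1, len(a))
        return a[:cut] + b[cut:], b[:cut] + a[cut:]
    return list(a), list(b)


def mutate(chrom, n_agents, rng):
    """With probability P_MUT, reassign one random task to a random agent."""
    chrom = list(chrom)
    if rng.random() < P_MUT:
        chrom[rng.randrange(len(chrom))] = rng.randrange(n_agents)
    return chrom


rng = random.Random(0)  # fixed seed for repeatable runs
population = init_population(n_tasks=12, n_agents=4, rng=rng)
child_a, child_b = crossover(population[0], population[1], rng)
mutant = mutate(child_a, 4, rng)
```

A full run would repeat selection, crossover, and mutation for `MAX_GEN` generations; that loop is omitted here.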

The reinforcement learning component uses a Deep Q-Network (DQN) structure to achieve adaptive updates of the scheduling policy. The learning rate is set to 0.001 to control parameter updates and ensure stable convergence; the discount factor γ is set to 0.9 to balance short-term task rewards and long-term system performance. These parameters were determined through pre-experiments and sensitivity analysis, demonstrating effective improvement in task scheduling convergence, stability, and robustness.
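The effect of the two reported hyperparameters can be seen in the temporal-difference target that a DQN regresses toward. The sketch below uses a tabular Q-function as a simplified stand-in for the network (in a DQN the learning rate is the optimizer step size on the network weights); the state names, reward, and Q-values are made up for illustration.

```python
GAMMA = 0.9            # discount factor reported in the text
LEARNING_RATE = 0.001  # learning rate reported in the text


def td_target(reward, next_q_values, done):
    """Bootstrapped target: r + gamma * max_a' Q(s', a'), or r at episode end."""
    return reward if done else reward + GAMMA * max(next_q_values)


def q_update(q, state, action, reward, next_state, done=False):
    """Move Q(state, action) a small step toward the TD target; return the target."""
    target = td_target(reward, q[next_state], done)
    q[state][action] += LEARNING_RATE * (target - q[state][action])
    return target


# Two scheduling states with two candidate actions each.
q = {"s0": [0.0, 0.0], "s1": [1.0, 2.0]}
target = q_update(q, "s0", action=1, reward=1.0, next_state="s1")
```

Here the target is 1.0 + 0.9 × 2.0 = 2.8, and the small learning rate moves the stored value only to 0.0028, which reflects the emphasis on stable convergence.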

To ensure a fair and meaningful evaluation, baseline methods are selected according to the operational characteristics and typical application scenarios of different agent types, rather than enforcing a unified planning algorithm for all agents. This design reflects practical deployment considerations in heterogeneous disinfection systems.

For aerial agents (UAVs), baseline path planning methods emphasize coverage efficiency and obstacle avoidance in open urban spaces. Classical grid-based and sampling-based planners are adopted, as they are widely used in UAV coverage and surveillance tasks.

For ground vehicles, baselines focus on road-constrained navigation and coverage limitations caused by urban infrastructure. Graph-based planning methods are employed, which are commonly applied in ground vehicle routing and sanitation operations.

For human operators, task allocation baselines are defined using rule-based assignment strategies that reflect manual operation constraints and safety considerations, which are consistent with practical disinfection workflows.

To validate the effectiveness of the proposed dynamic multi-objective task scheduling algorithm combining improved NSGA-II and reinforcement learning (MO-NSGA-II + RL), several comparative experiments were designed. The following baseline methods were selected for performance reference:

(1) Baseline A (Static NSGA-II): traditional NSGA-II is used for static task allocation without online adaptive adjustment, performing only offline multi-objective optimization.

(2) Baseline B (MOEA/D): multi-objective evolutionary algorithm based on decomposition is used to solve the task allocation problem, achieving balanced multi-objective optimization, but without reinforcement learning for online adaptation.

(3) Baseline C (Pure RL, DDPG): pure reinforcement learning (Deep Deterministic Policy Gradient, DDPG) is used for task allocation, emphasizing online adaptivity, but lacking global search and Pareto multi-objective balancing mechanisms.

(4) Proposed Method (MO-NSGA-II + RL): combines the global multi-objective search capability of the improved NSGA-II with the online adaptive optimization ability of reinforcement learning, achieving comprehensive optimization of task coverage, operational efficiency, EC, and system robustness.

The parameter settings for NSGA-II follow commonly adopted configurations in the literature, with minor adjustments to ensure stable convergence under heterogeneous task allocation scenarios. Population size and iteration numbers are selected to balance computational efficiency and solution quality, while crossover and mutation probabilities are kept consistent with classical NSGA-II recommendations. These settings are applied uniformly across all comparative and ablation experiments to ensure fairness and reproducibility, rather than to claim inherent superiority over the classical NSGA-II.

Evaluation metrics and benchmark methods

-

This set of experiments primarily validates the overall effectiveness of the proposed framework by comparing it with baseline methods under identical experimental settings, highlighting the benefits of heterogeneous collaboration and hybrid coordination.

In this study, nine key performance metrics are considered for evaluating the proposed multi-platform collaborative system: CR, WT/WE, EC, System SB, Scheduling Stability (SS), TB, CC, FTR, and CF. These metrics have been formally defined in the section on task scenario modeling.

Experimental results and performance analysis

-

These experiments focus on evaluating the robustness of the proposed framework in dynamic environments, demonstrating its ability to adapt to task variations and agent-level disturbances through online scheduling and predictive coordination.

Multi-objective task allocation performance

-

This section validates the performance of the proposed dynamic multi-objective scheduling algorithm (MO-NSGA-II+RL) in complex urban scenarios, focusing on multi-agent collaboration, task allocation, and system robustness. Experiments evaluate performance along two dimensions:

(1) Task execution entity: single-agent (UAV, large or small spraying vehicles, or ground personnel) vs multi-agent collaboration, where multiple platforms coordinate to improve efficiency and coverage.

(2) Task allocation strategy: static allocation assigns tasks once at the start, while dynamic allocation continuously updates schedules based on real-time task states and environmental changes, enhancing resource utilization and robustness.

Simulation results based on historical data involve ten independent runs per scenario, with averages recorded. Table 1 summarizes the performance metrics across different entity and allocation combinations.

Table 1. Comparison of multi-objective task allocation performance under multi-agent and dynamic assignment strategies.

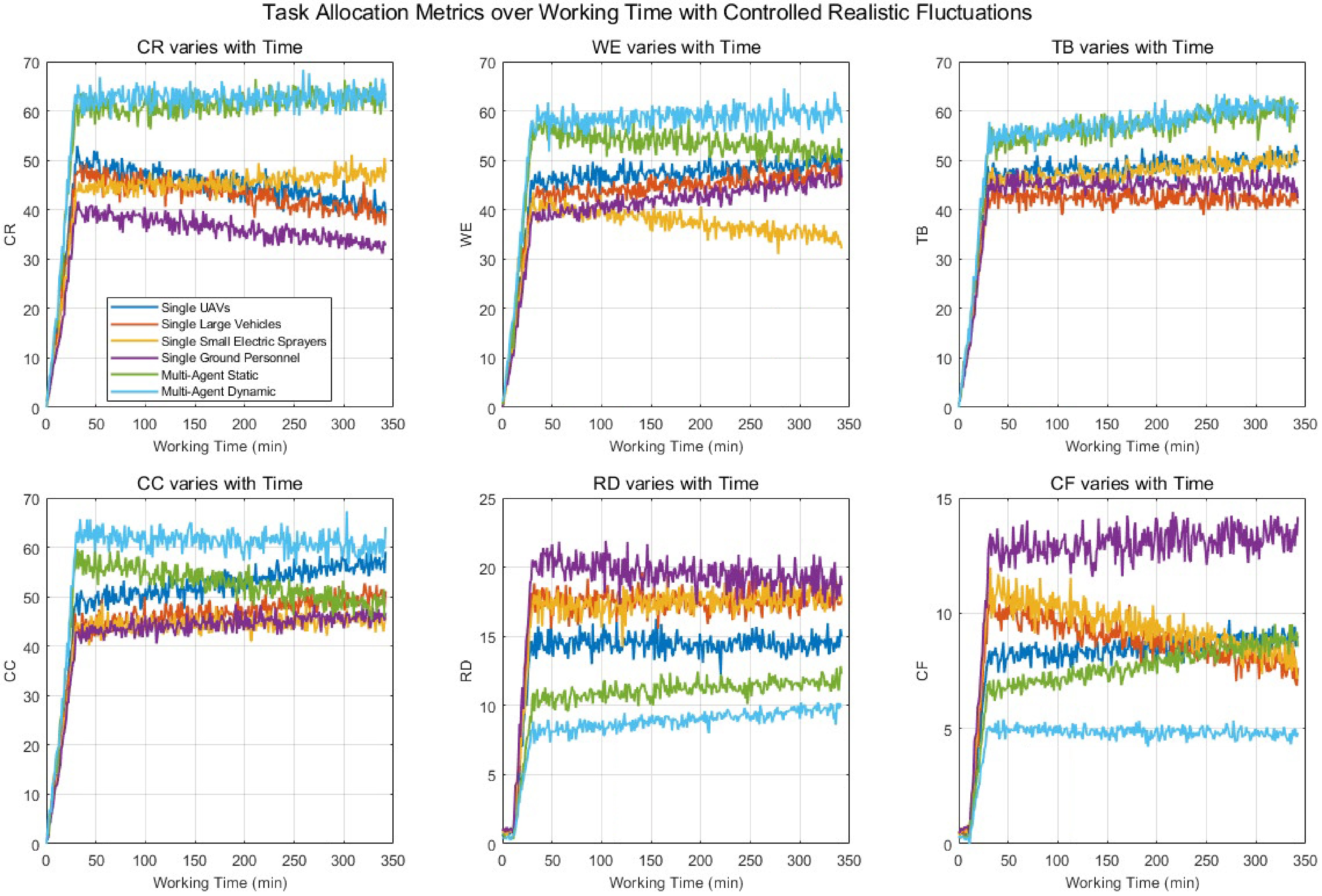

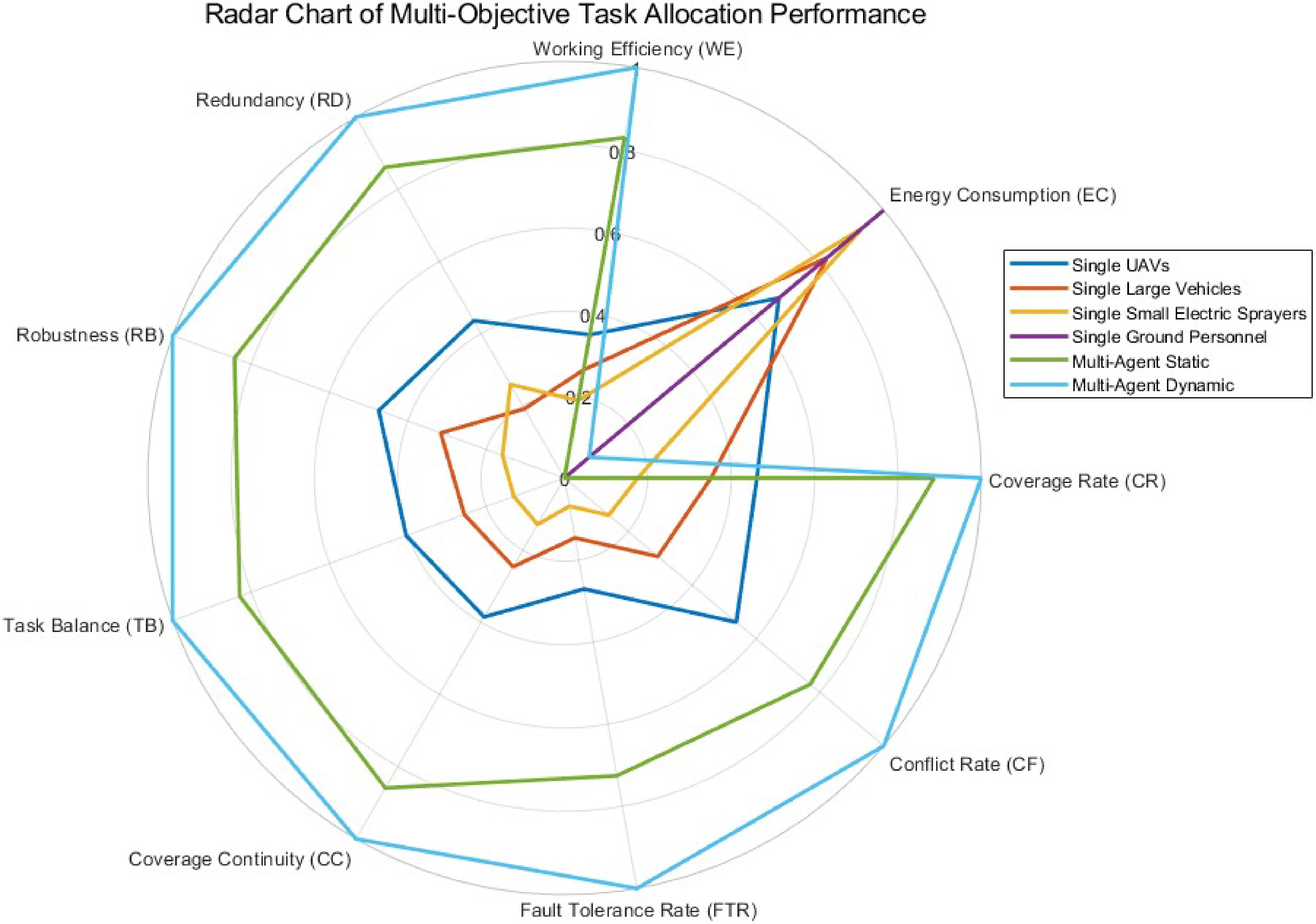

| Assignment strategy | CR (%) | WE (%) | EC (MJ) | RD (%) | RB | TB (%) | CC (%) | FTR (%) | CF (%) |
|---|---|---|---|---|---|---|---|---|---|
| Single agent UAV | 72.45 | 65.12 | 120.34 | 15.22 | 0.81 | 67.5 | 70.12 | 62.34 | 8.45 |
| Single agent large spraying vehicle | 68.34 | 62.45 | 115.78 | 18.15 | 0.78 | 64.81 | 66.5 | 59.12 | 10.22 |
| Single agent small electric sprayers | 61.78 | 60.12 | 112.45 | 17.34 | 0.75 | 62.50 | 63.45 | 57.12 | 11.33 |
| Single agent ground personnel | 55.21 | 54.23 | 110.45 | 20.45 | 0.72 | 60.15 | 60.11 | 55.34 | 12.33 |
| Multi-agent collaboration (static) | 88.34 | 80.12 | 140.56 | 10.12 | 0.88 | 75.23 | 82.45 | 74.12 | 6.78 |
| Multi-agent collaboration (dynamic) | 92.56 | 85.45 | 138.23 | 8.45 | 0.91 | 78.34 | 86.12 | 81.23 | 5.12 |

To further analyze the effectiveness of the proposed method, Fig. 2 presents comparison curves for six key metrics (CR, WE, RD, TB, CC, and CF) between a single UAV and the proposed method.

To more realistically illustrate the dynamic changes of multi-objective task allocation under different agent combinations and allocation strategies, this study generated evolution curves over operation time for six key metrics: CR, WE, TB, CC, RD, and CF, using a stripe-curve method.

The curve generation fully accounts for the phased characteristics of real operations. Initial phase (0–10 min): metrics rise slowly with noticeable fluctuations, reflecting the instability during system startup and preliminary deployment. Rapid growth phase (10–30 min): CR, WE, TB, and CC increase rapidly, while RD and CF peak and then start to decline, capturing concentrated task conflicts and redundant operations. Stabilization phase (after 30 min): curves gradually stabilize, with minor fluctuations and occasional crossovers, representing temporary variations caused by unexpected events, or resource adjustments in actual operations.

The generated curves not only align with the experimental averages, but also vividly demonstrate the practical effects of dynamic optimization scheduling and multi-agent collaboration. Notably, the curves for dynamic multi-agent allocation are generally higher than those of single-agent or static allocation schemes, while RD and CF progressively decrease, further validating the significant advantages of the proposed dynamic scheduling algorithm (improved NSGA-II combined with reinforcement learning) in terms of coverage, efficiency, task balance, and conflict suppression.
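As a worked check of this comparison, the relative change of the dynamic multi-agent scheme against the single UAV can be computed directly from the Table 1 averages. The sketch below is illustrative only: the helper name `relative_change` is an assumption introduced here, and the values are copied from the two corresponding rows of Table 1.

```python
# Table 1 averages for two rows (values copied from the table).
single_uav = {"CR": 72.45, "WE": 65.12, "RD": 15.22, "TB": 67.5, "CC": 70.12, "CF": 8.45}
multi_dynamic = {"CR": 92.56, "WE": 85.45, "RD": 8.45, "TB": 78.34, "CC": 86.12, "CF": 5.12}


def relative_change(baseline: float, value: float) -> float:
    """Percentage change of `value` relative to `baseline` (hypothetical helper)."""
    return 100.0 * (value - baseline) / baseline


# Positive numbers mean the metric went up; for RD and CF a negative change is the goal.
changes = {m: round(relative_change(single_uav[m], multi_dynamic[m]), 2) for m in single_uav}

for metric, pct in changes.items():
    print(f"{metric}: {pct:+.2f}%")
```

On these averages, CR rises by roughly 27.8% relative to the single UAV, while CF falls by roughly 39.4%.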

Figure 3 presents a radar chart illustrating the comprehensive performance of the proposed method in multi-agent task allocation, covering nine key evaluation metrics. Different curves correspond to single-agent execution (UAV, large spraying vehicle, small electric spraying vehicle, and ground personnel), multi-agent static allocation, and multi-agent dynamic optimization allocation, providing an intuitive comparison of each strategy across multiple objectives.

Path planning and collaborative operation performance

-

This section aims to evaluate the performance of different path planning algorithms and multi-platform collaborative operations in complex urban environments, focusing on task efficiency, coverage accuracy, and EC, thereby validating the effectiveness and feasibility of the proposed multi-objective optimization and collaboration strategies in path planning. The experiments involve three types of operational agents: UAVs, ground vehicles (large spraying vehicles and small electric spraying vehicles), and ground personnel, while also examining the impact of multi-agent collaboration on CFs and CC.

In the experimental design, multiple path planning algorithms are applied to each type of agent: UAVs utilize improved A*, RRT*, genetic algorithms, and deep reinforcement learning (DRL); ground vehicles employ Dijkstra's algorithm, reinforcement learning, and genetic algorithms; ground personnel adopt graph-based methods and grid search strategies. For multi-agent collaboration experiments, the path planning results of UAVs, ground vehicles, and personnel are integrated, combined with task scheduling and conflict management strategies, enabling cross-platform information sharing and dynamic cooperation.
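For readers unfamiliar with the ground-vehicle baseline, the following is a minimal sketch of Dijkstra's algorithm on a small road graph. The intersections, segment lengths, and function name are hypothetical and are not taken from the experimental scenario; the sketch shows only the textbook algorithm, not the authors' implementation.

```python
import heapq


def dijkstra(graph, source, target):
    """Return (total_length, path) of the shortest route, or (inf, []) if unreachable."""
    dist = {source: 0.0}
    prev = {}
    heap = [(0.0, source)]
    visited = set()
    while heap:
        d, node = heapq.heappop(heap)
        if node in visited:
            continue  # stale heap entry for an already settled node
        visited.add(node)
        if node == target:
            break
        for neighbor, length in graph.get(node, {}).items():
            nd = d + length
            if nd < dist.get(neighbor, float("inf")):
                dist[neighbor] = nd
                prev[neighbor] = node
                heapq.heappush(heap, (nd, neighbor))
    if target not in dist:
        return float("inf"), []
    # Walk the predecessor links back from the target to rebuild the route.
    path = [target]
    while path[-1] != source:
        path.append(prev[path[-1]])
    path.reverse()
    return dist[target], path


# Hypothetical road segments (metres) between intersections A..E.
roads = {
    "A": {"B": 120.0, "C": 300.0},
    "B": {"A": 120.0, "C": 100.0, "D": 400.0},
    "C": {"A": 300.0, "B": 100.0, "D": 150.0},
    "D": {"B": 400.0, "C": 150.0, "E": 80.0},
    "E": {"D": 80.0},
}

length, route = dijkstra(roads, "A", "E")
print(length, route)
```

In this toy graph the shortest route from A to E passes through B, C, and D with a total length of 450 m.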

To comprehensively assess the performance of different path planning algorithms and multi-agent collaborations in complex urban environments, this study systematically analyzed the path planning outcomes of UAVs, ground vehicles (large and small), and ground personnel. Key performance metrics recorded include path length, task completion time, EC, CR, CF, CC, and TB, with results averaged over 10 independent trials. Table 2 presents a comparison of single-agent path planning algorithms and multi-agent collaborative strategies across these metrics, providing an intuitive basis for evaluating the effectiveness of different path planning methods and collaboration strategies.

Table 2. Comparison of path planning and collaborative operation performance under different algorithms and multi-agent strategies.

| Assignment strategy/algorithm | CR (%) | EC (MJ) | WE (%) | RD (%) | RB | TB (%) | CC (%) | FTR (%) | CF (%) | Path length (m) |
|---|---|---|---|---|---|---|---|---|---|---|
| UAV - A* | 74.12 | 125.34 | 66.78 | 14.56 | 0.82 | 68.45 | 71.12 | 63.34 | 8.78 | 1,450 |
| UAV - RRT* | 75.34 | 122.56 | 68.12 | 13.89 | 0.84 | 69.78 | 72.45 | 64.12 | 8.12 | 1,423 |
| UAV - GA | 72.45 | 120.78 | 65.12 | 15.22 | 0.81 | 67.5 | 70.12 | 62.34 | 8.45 | 1,478 |
| UAV - DRL | 78.23 | 118.34 | 70.45 | 12.78 | 0.85 | 71.23 | 73.45 | 65.12 | 7.89 | 1,402 |
| Large spraying vehicle - Dijkstra | 69.12 | 115.78 | 63.45 | 17.34 | 0.78 | 65.12 | 66.78 | 59.45 | 10.22 | 1,600 |
| Large spraying vehicle - RL | 70.45 | 113.45 | 64.78 | 16.89 | 0.8 | 66.45 | 67.23 | 60.12 | 9.78 | 1,575 |
| Small electric sprayers - GA | 61.78 | 112.45 | 60.12 | 17.34 | 0.75 | 62.5 | 63.45 | 57.12 | 11.33 | 1,520 |
| Ground personnel - Graph Theory | 55.21 | 110.45 | 54.23 | 20.45 | 0.72 | 60.15 | 60.1 | 55.34 | 12.33 | 1,680 |
| Multi-agent static | 88.34 | 140.56 | 80.12 | 10.12 | 0.88 | 75.23 | 82.45 | 74.12 | 6.78 | 1,300 |
| Multi-agent dynamic | 92.56 | 138.23 | 85.45 | 8.45 | 0.91 | 78.34 | 86.12 | 81.23 | 5.12 | 1,285 |

The experimental results indicate significant differences in the performance of various path planning algorithms in complex urban environments. For single-agent operations, the improved A* and DRL algorithms demonstrated higher coverage efficiency and faster task execution for UAV path planning, while genetic algorithms offered certain advantages in energy consumption optimization. For ground vehicles, both reinforcement learning and Dijkstra's algorithm provided stable performance in terms of path length and task completion time. Ground personnel path planning showed good coverage continuity, but relatively lower efficiency.

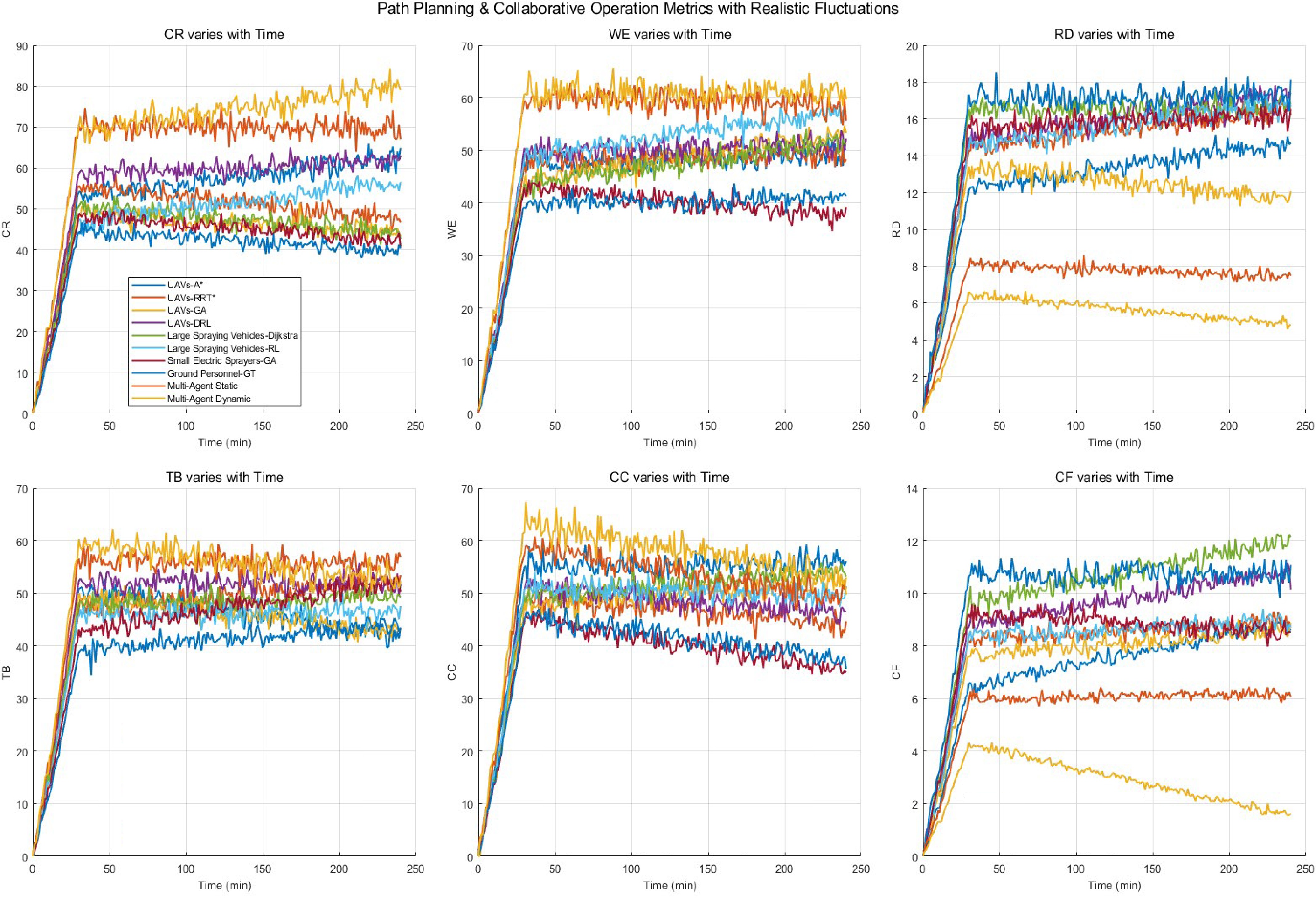

To visually present the effects of different path planning algorithms and multi-platform collaboration, line charts were generated showing the dynamic evolution of six key indicators over task execution time: CR, WE, RD, TB, CC, and CF. These figures not only reflect algorithm performance across different task phases but also illustrate the practical impact of multi-agent collaboration on efficiency, coverage precision, and conflict management during operations.

Figure 4 illustrates the dynamic evolution of six key indicators—CR, WE, RD, TB, CC, and CF—over task execution time for different path planning algorithms and multi-platform collaborative operations.

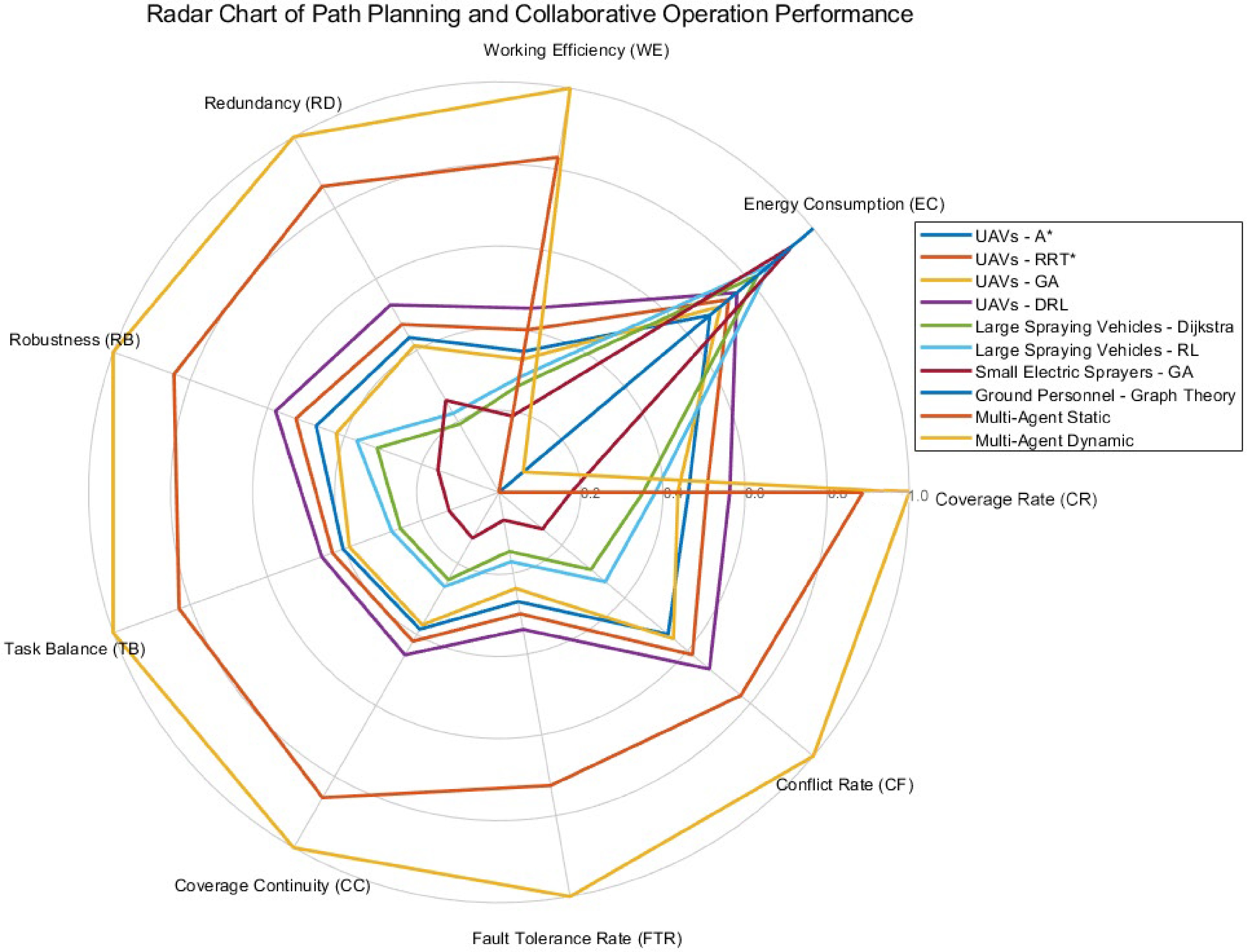

To comprehensively evaluate the performance of different path planning algorithms and collaboration strategies across multiple objectives, a radar chart was also employed to visualize each scheme's performance on key indicators: CR, EC, WT, task conflict rate (TCF), CC, and TB. The radar chart, shown in Fig. 5, allows intuitive comparison of the advantages and disadvantages of various algorithms and strategies across multiple dimensions, highlighting the role of multi-agent dynamic collaborative optimization in enhancing overall task efficiency, reducing redundancy and conflicts, and improving task continuity and balance. This provides a clear visual basis for optimizing multi-platform path planning and collaborative operations.

As observed from the figure, UAV-DRL and the multi-agent dynamic collaboration scheme exhibit the best performance in CR, TB, and CC, demonstrating that combining deep reinforcement learning with real-time state information can significantly optimize UAV path planning. Meanwhile, multi-platform collaborative strategies further enhance the overall continuity and balance of task coverage. In contrast, single-agent static schemes or traditional algorithms show noticeable deficiencies in CC and TB, with some indicators falling below those of the multi-agent dynamic scheme, indicating that single-platform or static strategies are prone to task blind spots or duplicate operations in complex environments.

Regarding EC and WT, the multi-agent dynamic collaboration achieves efficiency optimization through reasonable task allocation and coordinated path planning, avoiding redundant paths and ineffective movements. This reduces the high energy consumption and long task durations observed in single-agent execution. Single-agent schemes, particularly those involving ground personnel or traditional algorithms, consume more energy and require longer task times, reflecting the impact of task duplication and scheduling conflicts on resource utilization. The CF further validates the effectiveness of collaborative optimization: the dynamic multi-agent scheme shows a significantly lower conflict rate than static allocation and single-agent execution, demonstrating that dynamic adjustment strategies can promptly mitigate operational conflicts among platforms, thereby improving system robustness.
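To make the notion of an operational conflict concrete, the sketch below flags any pair of platforms scheduled into the same grid cell at the same time step. This is an assumption made for illustration: the formal CF definition belongs to the task scenario modeling section and is not reproduced here, and the agent names and paths are hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def find_conflicts(paths):
    """Return sorted (time_step, cell, agent_a, agent_b) tuples for co-occupied cells."""
    occupancy = defaultdict(list)  # (time_step, cell) -> agents scheduled there
    for agent, cells in paths.items():
        for t, cell in enumerate(cells):
            occupancy[(t, cell)].append(agent)
    conflicts = []
    for (t, cell), agents in occupancy.items():
        # Every pair of agents sharing a cell at the same step counts as one conflict.
        for a, b in combinations(sorted(agents), 2):
            conflicts.append((t, cell, a, b))
    return sorted(conflicts)


# Hypothetical planned paths: one grid cell (row, col) per time step.
planned = {
    "uav_1": [(0, 0), (0, 1), (0, 2), (0, 3)],
    "sprayer_1": [(1, 1), (0, 1), (1, 1), (1, 2)],
    "crew_1": [(2, 2), (1, 2), (0, 2), (0, 3)],
}

conflicts = find_conflicts(planned)
for conflict in conflicts:
    print(conflict)
```

A dynamic scheduler could use such a list to decide which platform to delay or reroute; how the paper resolves conflicts is not specified at this level of detail.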

Dynamic scheduling and robustness analysis

-

This section evaluates the effectiveness of dynamic scheduling strategies integrating real-time state perception, predictive models, and robustness optimization for multi-platform collaborative operations. Key objectives include: leveraging LSTM/Transformer models to anticipate task delays or anomalies and trigger online reallocation; comparing static one-time allocation (Static) with predictive, RL-based, and integrated multi-agent dynamic strategies in terms of responsiveness, delay control, recovery, and resource utilization; and assessing the contribution of predictive and anomaly detection modules to overall robustness.

Experiments are conducted in a 47-grid urban scenario with six UAVs, two large spraying vehicles, eight small electric spraying vehicles, and 30 ground personnel. Disturbances simulate operational challenges, including random task insertions, temporary platform failures, blocked paths, and traffic congestion, with multiple repetitions for statistical significance.

Scheduling strategies are defined as follows:

Static: one-time task allocation with minimal rollback, no active adjustment.

Predictive: LSTM/Transformer forecasts short-term delays/failures (30–300 s); reallocation is triggered when risk thresholds are exceeded.

RL-Based: DQN or Actor-Critic generates reallocation actions based on current system state, guided by a reward function considering coverage, delays, energy, and conflicts.

Integrated multi-agent dynamic: combines offline Pareto solutions from improved NSGA-II with online RL and predictive priority adjustments for fine-grained task allocation.
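The RL-based strategy above is described as being guided by a reward that considers coverage, delays, energy, and conflicts. A minimal sketch of one such scalarized reward is given below. The weights, the normalization of each term, and the field names are placeholders chosen for illustration, since the actual reward used in the experiments is not specified in this section.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StepOutcome:
    coverage_gain: float   # fraction of area newly covered in this step, in [0, 1]
    delay_ratio: float     # fraction of active tasks behind schedule, in [0, 1]
    energy_ratio: float    # energy spent this step divided by a per-step budget
    conflict_ratio: float  # fraction of platforms involved in a conflict, in [0, 1]


# Hypothetical weights; the source does not report the values it uses.
WEIGHTS = {"coverage": 1.0, "delay": 0.5, "energy": 0.3, "conflict": 0.7}


def reward(o: StepOutcome, w=WEIGHTS) -> float:
    """Reward coverage and penalize delays, energy use, and conflicts."""
    return (w["coverage"] * o.coverage_gain
            - w["delay"] * o.delay_ratio
            - w["energy"] * o.energy_ratio
            - w["conflict"] * o.conflict_ratio)


good = StepOutcome(coverage_gain=0.20, delay_ratio=0.05, energy_ratio=0.40, conflict_ratio=0.00)
bad = StepOutcome(coverage_gain=0.05, delay_ratio=0.30, energy_ratio=0.90, conflict_ratio=0.25)
print(reward(good), reward(bad))
```

A weighted sum is only one way to combine the four terms; it is used here because it keeps the trade-off between objectives explicit.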

Dynamic scheduling follows the workflow: data collection → fusion → time-series prediction → anomaly detection → scheduling module (RL or NSGA-II repair) → updated task dispatch → execution with monitoring. Predictive models are updated offline, and applied online using a sliding window to ensure accuracy.
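The prediction and trigger steps of this workflow can be sketched as a sliding-window monitor that requests reallocation once the forecast delay exceeds a risk threshold. In the sketch below, a plain moving average stands in for the LSTM/Transformer predictor, and the window length and the 60 s threshold are hypothetical values, not settings reported in the paper.

```python
from collections import deque


class DelayRiskMonitor:
    """Sliding-window monitor that flags a task for reallocation.

    The forecast is a moving average of recent delays. It is a stand-in
    for the LSTM/Transformer predictor, not a reproduction of it.
    """

    def __init__(self, window: int = 5, threshold_s: float = 60.0):
        self.window = deque(maxlen=window)  # keeps only the latest samples
        self.threshold_s = threshold_s      # hypothetical risk threshold (seconds)

    def update(self, observed_delay_s: float) -> bool:
        """Add one observation and return True if reallocation should trigger."""
        self.window.append(observed_delay_s)
        forecast = sum(self.window) / len(self.window)
        return forecast > self.threshold_s


monitor = DelayRiskMonitor(window=3, threshold_s=60.0)
delays = [10.0, 20.0, 50.0, 90.0, 120.0]
triggers = [monitor.update(d) for d in delays]
print(triggers)
```

With these inputs, only the last observation pushes the windowed forecast above the threshold, so reallocation is requested once.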

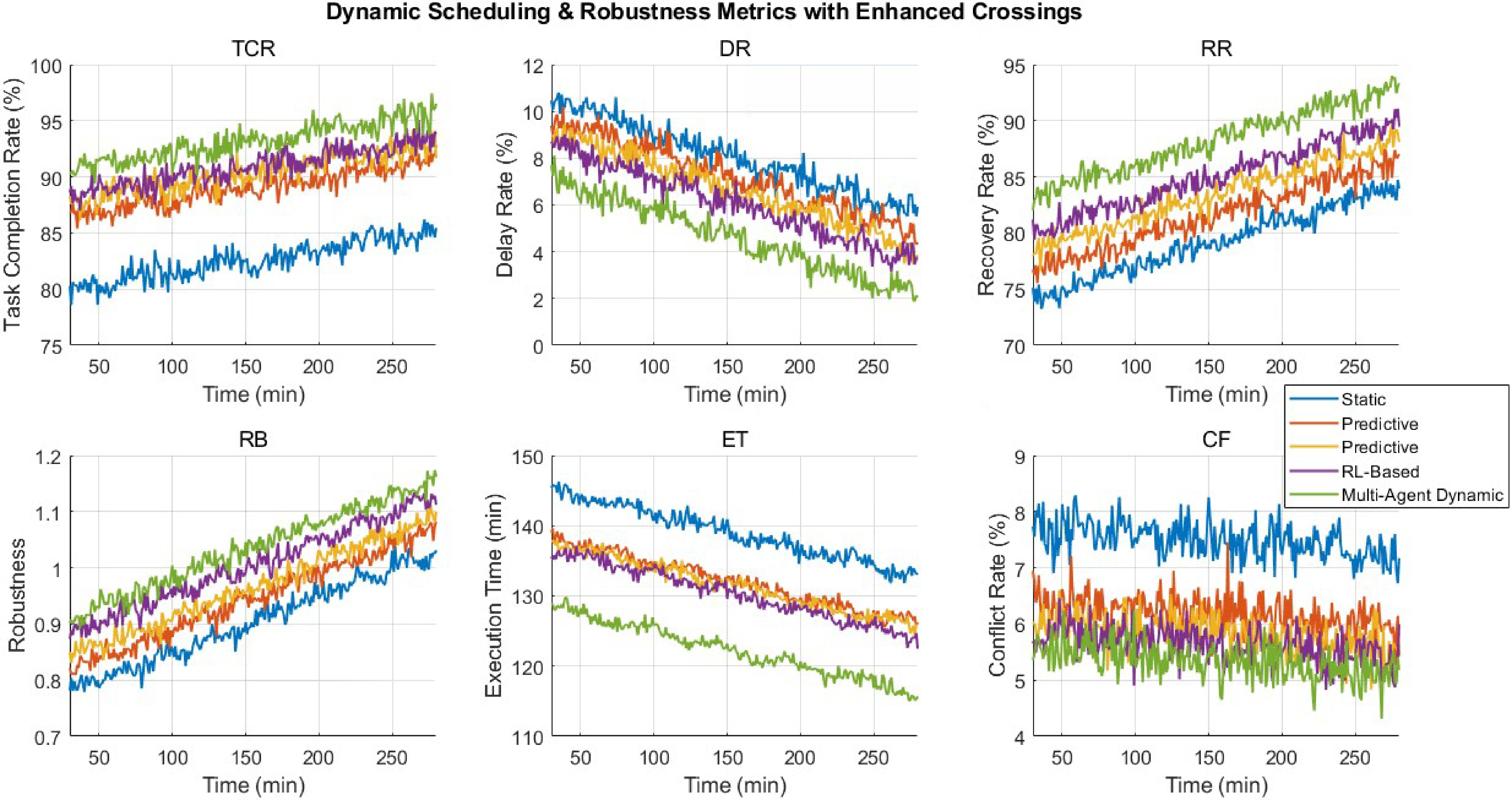

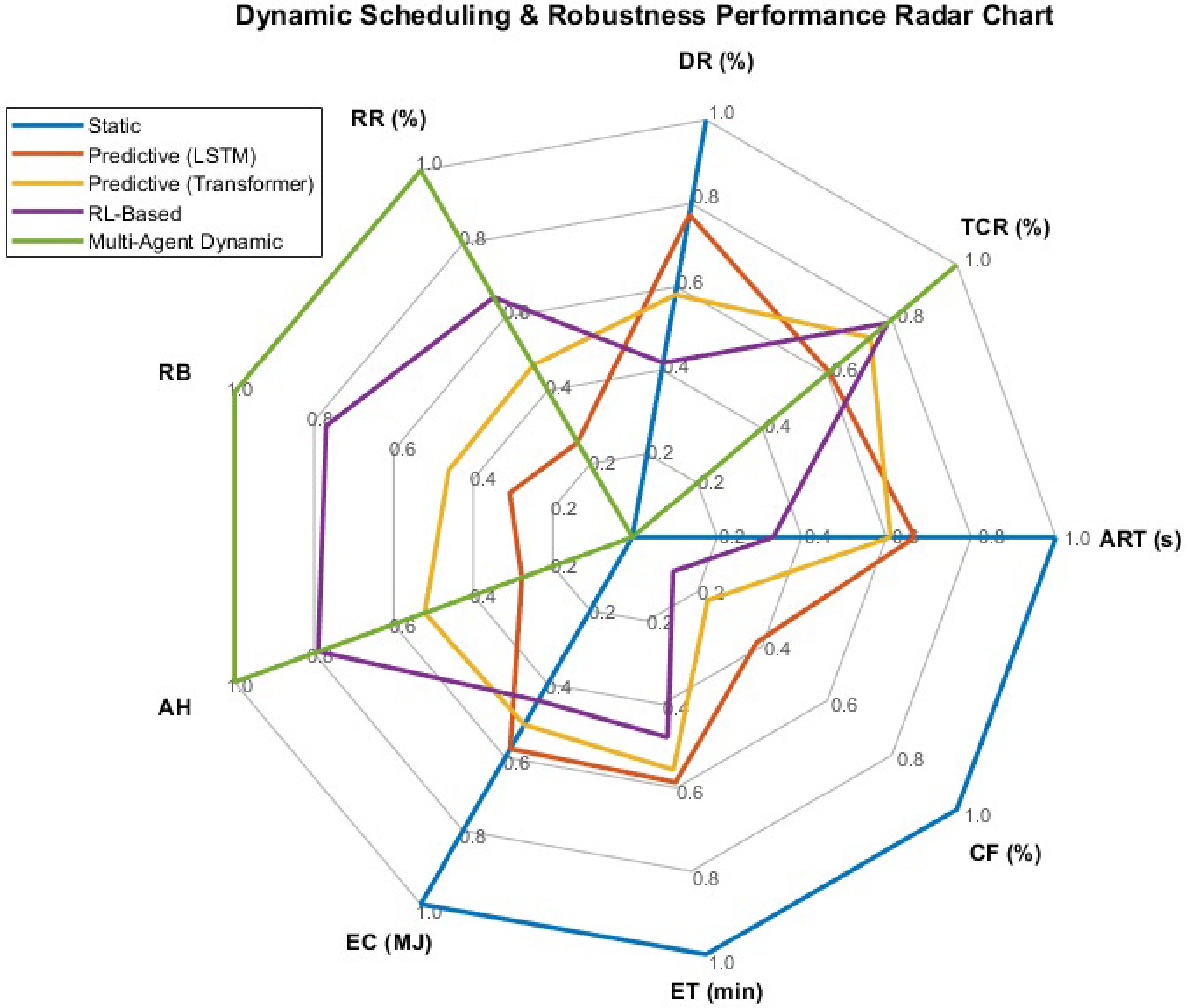

Performance is compared across strategies using metrics such as average response time (ART), task completion rate (TCR), delay rate (DR), recovery rate (RR), RB, anomaly handling, EC, total execution time, and multi-platform CF. Results are averaged over multiple trials, and Table 3 summarizes the quantitative comparison, highlighting the effects of dynamic scheduling on efficiency, resource utilization, and system robustness.

Table 3. Dynamic scheduling and robustness performance under different strategies.

| Strategy | ART (s) | TCR (%) | DR (%) | RR (%) | RB | AH | EC (MJ) | ET (min) | CF (%) |
|---|---|---|---|---|---|---|---|---|---|
| Static | 10.45 | 80.12 | 10.56 | 74.23 | 0.78 | 68.34 | 138.45 | 145.2 | 7.78 |
| Predictive (LSTM) | 9.12 | 86.45 | 9.78 | 76.56 | 0.82 | 71.12 | 134.12 | 138.3 | 6.45 |
| Predictive (Transformer) | 8.89 | 87.78 | 9.12 | 78.45 | 0.84 | 73.56 | 133.45 | 137.8 | 6.12 |
| RL-based | 7.78 | 88.34 | 8.56 | 80.12 | 0.88 | 76.23 | 132.78 | 136.5 | 5.89 |
| Multi-agent dynamic | 6.45 | 90.56 | 7.12 | 83.23 | 0.91 | 78.34 | 128.23 | 128.5 | 5.62 |

From Table 3, it can be observed that dynamic scheduling strategies outperform static one-time allocation across multiple metrics. Static scheduling exhibits a relatively long ART and high task DR, while task RR and anomaly handling capability are limited, indicating that static allocation struggles to cope with unexpected events and localized blockages in complex operational scenarios.

The predictive dynamic scheduling strategies (Predictive-LSTM/Transformer) significantly reduce scheduling response times, improve TCR and task RR, while effectively lowering task DR and CF. Among them, the Transformer model slightly outperforms LSTM in short-term state prediction, providing more stable performance in anticipating potential task delays and anomaly risks.

The RL-based online scheduling further optimizes multi-platform resource utilization by generating dynamic scheduling actions based on the current system state. This approach not only achieves higher task completion and robustness metrics, but also further reduces task delays and conflicts.

The integrated multi-agent dynamic strategy demonstrates the best overall performance. It achieves the shortest scheduling response time, the highest task completion and recovery rates, and significantly improved anomaly handling capability. This highlights the advantage of combining offline multi-objective optimization (NSGA-II) with online RL scheduling in complex urban environments. This strategy not only enhances overall operational efficiency, but also maintains task continuity and system stability under disturbances and localized blockages. Furthermore, it achieves the lowest EC and execution time among the compared strategies, demonstrating the role of dynamic scheduling and robustness optimization in multi-platform collaborative operations.

Figure 6 illustrates the dynamic changes of six key performance metrics over time for four different scheduling strategies. Overall, the static allocation strategy starts with a relatively low TCR and increases slowly; its DR is high with noticeable fluctuations, while task RR and system RB remain relatively low. Meanwhile, the CF is elevated, and execution efficiency (execution time, ET) is the poorest, indicating that static strategies struggle to cope with unexpected events or localized blockages in complex urban environments.

The predictive dynamic scheduling (Predictive-LSTM/Transformer) strategy anticipates potential task delays or anomalies, triggering online task reallocation. This significantly improves TCR and RR, while reducing DR and CF, resulting in enhanced system robustness and improved operational efficiency. The RL-Based online scheduling further optimizes decision-making actions, enhancing the system's adaptability to local blockages, traffic disruptions, and platform failures, with all six metrics outperforming those of predictive dynamic scheduling.

To comprehensively evaluate the integrated performance of different dynamic scheduling strategies in multi-platform operations, a radar chart is presented in Fig. 7, showing normalized values across multiple dimensions: average response time (ART), TCR, task DR, task RR, system RB, anomaly handling capability (AH), EC, execution efficiency (ET), and CF. This visualization allows for an intuitive comparison of the advantages and limitations of static, predictive (LSTM/Transformer), RL-based and integrated multi-agent dynamic strategies under multi-objective optimization.
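The normalization procedure behind the radar chart is not stated, so the sketch below assumes a common choice: min-max scaling per metric, with lower-is-better metrics (ART, DR, EC, ET, CF) inverted so that a larger value always means better. The data are the Table 3 averages; the function and constant names are introduced here for illustration only.

```python
# Table 3 columns: ART (s), TCR (%), DR (%), RR (%), RB, AH, EC (MJ), ET (min), CF (%).
METRICS = ["ART", "TCR", "DR", "RR", "RB", "AH", "EC", "ET", "CF"]
LOWER_IS_BETTER = {"ART", "DR", "EC", "ET", "CF"}

table3 = {
    "Static": [10.45, 80.12, 10.56, 74.23, 0.78, 68.34, 138.45, 145.2, 7.78],
    "Predictive (LSTM)": [9.12, 86.45, 9.78, 76.56, 0.82, 71.12, 134.12, 138.3, 6.45],
    "Predictive (Transformer)": [8.89, 87.78, 9.12, 78.45, 0.84, 73.56, 133.45, 137.8, 6.12],
    "RL-based": [7.78, 88.34, 8.56, 80.12, 0.88, 76.23, 132.78, 136.5, 5.89],
    "Multi-agent dynamic": [6.45, 90.56, 7.12, 83.23, 0.91, 78.34, 128.23, 128.5, 5.62],
}


def normalize(rows):
    """Min-max scale each metric to [0, 1], flipping lower-is-better metrics."""
    scaled = {name: [] for name in rows}
    for i, metric in enumerate(METRICS):
        column = [values[i] for values in rows.values()]
        lo, hi = min(column), max(column)
        for name, values in rows.items():
            x = (values[i] - lo) / (hi - lo) if hi > lo else 0.0
            scaled[name].append(1.0 - x if metric in LOWER_IS_BETTER else x)
    return scaled


scaled = normalize(table3)
for name, values in scaled.items():
    print(name, [round(v, 2) for v in values])
```

Under this assumed scaling, the static strategy sits at 0 and the integrated multi-agent dynamic strategy at 1 on every axis, because they are respectively the worst and best rows of Table 3 on each metric.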

From the figure, it is evident that the integrated multi-agent dynamic scheduling strategy outperforms the other strategies across the majority of metrics. Compared with static allocation, dynamic strategies significantly improve TCR and task RR, while effectively reducing task DR and CF, demonstrating strong adaptability to unexpected events, localized blockages, and platform anomalies in complex urban environments. The incorporation of predictive models (LSTM/Transformer) allows the scheduler to anticipate potential risks and adjust task allocation proactively, further enhancing system robustness and anomaly handling capability. The RL-based online scheduling strategy excels in execution efficiency (ET) and EC control, optimizing platform resource usage while maintaining high coverage and completion rates.

Overall performance evaluation and baseline comparison

-

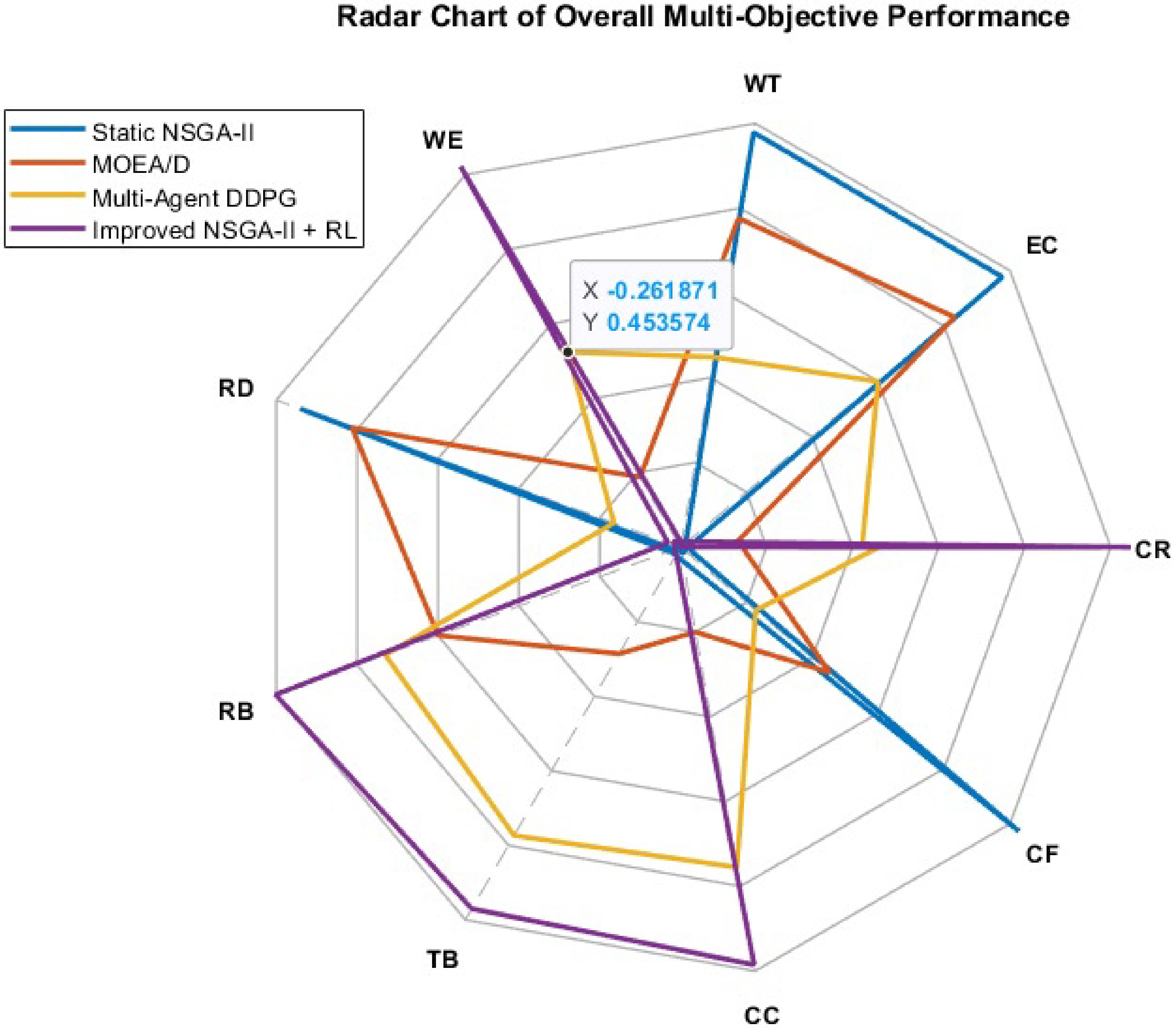

This section integrates the results from the previous experimental dimensions and provides a comprehensive evaluation of the proposed improved NSGA-II combined with reinforcement learning (RL) for multi-platform, multi-objective task scheduling, in comparison with three baseline methods: static NSGA-II, MOEA/D, and multi-agent pure RL (DDPG). Through multi-dimensional metric analysis, the study verifies the proposed method's multi-objective optimization capability and system stability in complex urban environments.

In terms of experimental design, the study builds on the previously described typical task scenarios involving UAVs, ground vehicles (large spray trucks, small electric spray vehicles), and ground personnel. Each method is comprehensively evaluated across key metrics, including CR, WE, EC, CF, TB, CC, ART, and RB. Each experiment is repeated 10 times independently, and the results are averaged to ensure data stability and statistical significance.

During system operation, all methods execute under the same task assignments and disturbance conditions. The proposed NSGA-II + RL approach combines offline multi-objective Pareto solutions with online RL adaptive scheduling, leveraging multi-agent information sharing and dynamic task reallocation to achieve overall improvements in coverage, efficiency, and task continuity. In comparison, Static NSGA-II maintains some stability in certain metrics but exhibits a slower response to unexpected tasks, local blockages, or platform failures, resulting in higher task delay and conflict rates. MOEA/D performs steadily in multi-objective trade-offs, but lacks dynamic scheduling capability. Multi-agent DDPG provides online adaptive control, but suffers from suboptimal coverage balance and energy consumption management.

The experimental results are summarized in Table 4, providing a quantitative basis for evaluating the performance differences among these methods.

Table 4. Overall performance comparison of multi-agent task scheduling methods.

| Method | CR (%) | EC (MJ) | WT (min) | WE (%) | RD (%) | RB | TB (%) | CC (%) | FTR (%) | CF (%) |
|---|---|---|---|---|---|---|---|---|---|---|
| Static NSGA-II | 84.23 | 142.45 | 145.28 | 72.56 | 15.12 | 0.78 | 68.12 | 70.45 | 74.23 | 8.78 |
| MOEA/D | 85.12 | 140.78 | 142.34 | 73.45 | 14.78 | 0.84 | 69.34 | 71.12 | 76.12 | 8.12 |
| Multi-agent DDPG | 87.45 | 138.34 | 138.55 | 75.23 | 13.45 | 0.85 | 71.45 | 73.12 | 77.45 | 7.89 |
| Improved NSGA-II + RL | 91.56 | 132.23 | 132.51 | 77.45 | 13.12 | 0.88 | 72.34 | 74.02 | 78.23 | 7.62 |

From Table 4, it can be seen that the NSGA-II + RL method outperforms the baseline approaches in key metrics such as CR, WE, TB, CC, and RB. At the same time, it achieves the lowest EC, WT, RD, and CF among the compared methods, indicating that dynamic scheduling combined with multi-agent collaboration can significantly enhance overall task performance.

In contrast, static NSGA-II—due to its one-time task allocation—shows higher redundancy and conflict rates, along with lower coverage efficiency and task continuity. MOEA/D maintains stability in multi-objective optimization, but lacks real-time scheduling capability, leading to slightly weaker task responsiveness and conflict control. Multi-agent DDPG offers flexible online scheduling, yet its TB, CC, and FTR remain slightly lower than the proposed method. Overall, the combination of offline improved NSGA-II and online RL for dynamic scheduling achieves the best comprehensive performance in complex urban environments, balancing efficiency, coverage precision, and robustness.
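The claim of best comprehensive performance can be checked against Table 4 with a Pareto dominance test: one method dominates another if it is no worse on every metric and strictly better on at least one. The sketch below applies that test to the Table 4 averages. Treating CR, WE, RB, TB, CC, and FTR as larger-is-better and the remaining columns as smaller-is-better is our reading of the text, and the function name is introduced here for illustration.

```python
# Table 4 columns and the subset where larger values are better.
COLUMNS = ["CR", "EC", "WT", "WE", "RD", "RB", "TB", "CC", "FTR", "CF"]
MAXIMIZE = {"CR", "WE", "RB", "TB", "CC", "FTR"}

table4 = {
    "Static NSGA-II": [84.23, 142.45, 145.28, 72.56, 15.12, 0.78, 68.12, 70.45, 74.23, 8.78],
    "MOEA/D": [85.12, 140.78, 142.34, 73.45, 14.78, 0.84, 69.34, 71.12, 76.12, 8.12],
    "Multi-agent DDPG": [87.45, 138.34, 138.55, 75.23, 13.45, 0.85, 71.45, 73.12, 77.45, 7.89],
    "Improved NSGA-II + RL": [91.56, 132.23, 132.51, 77.45, 13.12, 0.88, 72.34, 74.02, 78.23, 7.62],
}


def dominates(a, b):
    """True if row `a` is no worse than `b` on every metric and better on at least one."""
    no_worse, strictly_better = True, False
    for metric, x, y in zip(COLUMNS, a, b):
        if metric not in MAXIMIZE:
            x, y = -x, -y  # turn a minimization metric into a maximization one
        if x < y:
            no_worse = False
        elif x > y:
            strictly_better = True
    return no_worse and strictly_better


# A method is non-dominated if no other method dominates it.
non_dominated = [
    name for name, row in table4.items()
    if not any(dominates(other, row) for other_name, other in table4.items() if other_name != name)
]
print(non_dominated)
```

On the reported averages, the improved NSGA-II + RL row is the only non-dominated one, which is consistent with the comparison drawn from Table 4.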

To visually present the comprehensive performance of each method, a multi-objective radar chart is provided in Fig. 8, comparing NSGA-II + RL with the three baseline schemes across metrics including coverage, EC, WT, CF, TB, CC, RB, and average response time. The radar chart clearly highlights the advantages of the proposed method in terms of coverage, task efficiency, and robustness, while also revealing the limitations of baseline methods in multi-objective trade-offs, providing an intuitive and quantitative basis for optimizing multi-platform collaborative task scheduling.

The figure illustrates the comprehensive performance of four methods across nine key performance metrics, including CR, EC, WT, WE, RD, RB, TB, CC and CF.

From the radar chart, it is clear that the Improved NSGA-II + RL method performs best across most metrics, achieving the highest levels in coverage, WE, TB, and RB, while maintaining relatively low EC, WT, and CF, demonstrating its overall advantage under multi-objective optimization.

Ablation study

-

The ablation study is designed to isolate and quantify the contribution of the key system components that correspond to the claimed contributions of the proposed framework: heterogeneous collaboration, online adaptive scheduling, and predictive coordination. Each ablation scenario removes or disables a specific module, while keeping all other experimental settings unchanged. By comparing the performance degradation under different ablation settings, the contribution of each component can be quantitatively assessed.

Agent ablation

-

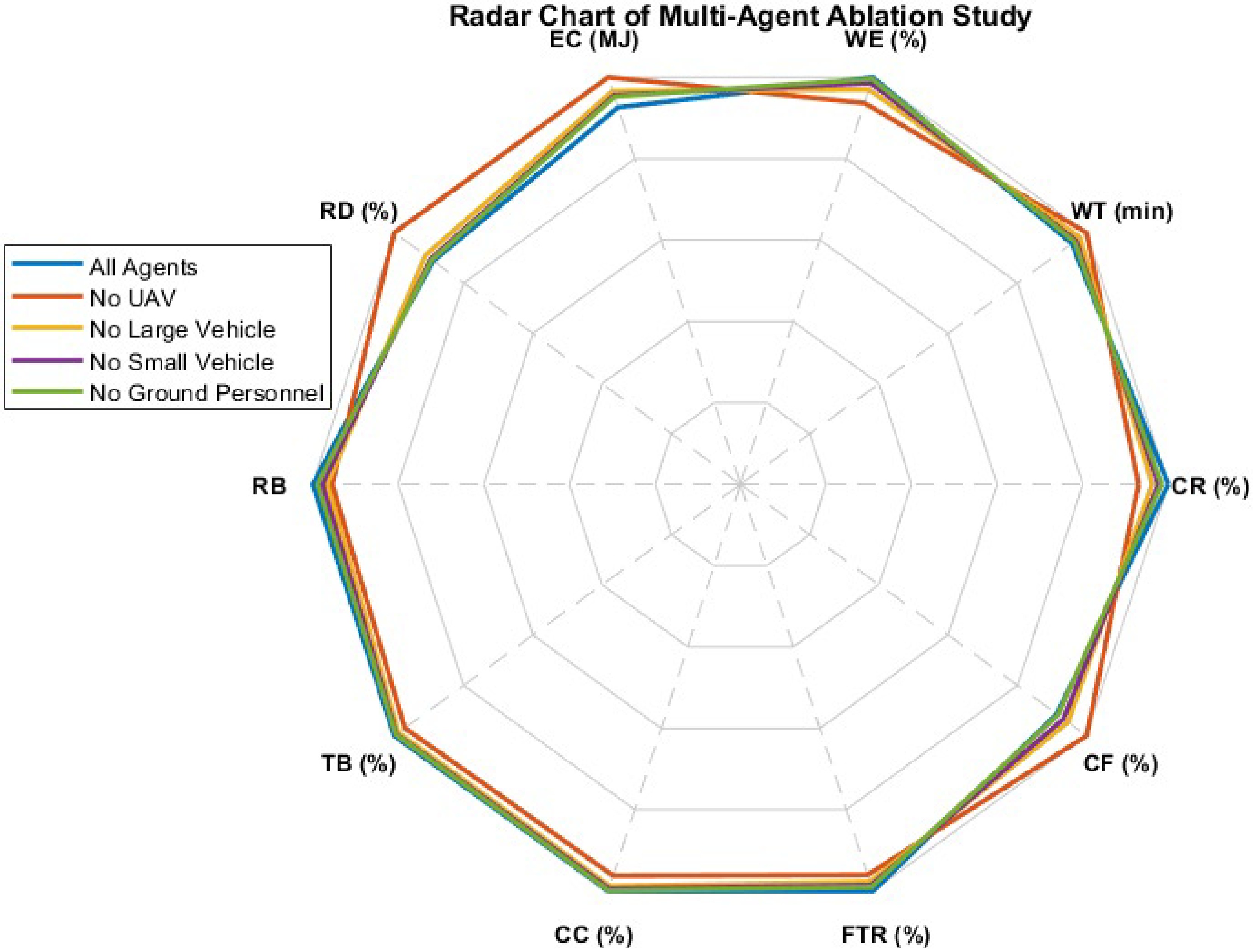

This ablation removes heterogeneous collaboration by restricting the system to a single agent type, aiming to validate the contribution of unified multi-type agent coordination.

This experiment aims to evaluate the contribution of different operational agents to multi-platform task execution, and to verify the necessity of a multi-agent collaborative design in complex environments. The study conducts ablation experiments by removing one or more operational agents, including UAVs, large spraying vehicles, small electric spraying vehicles, and ground personnel, and observes the resulting differences in system performance across key metrics. The comparative metrics include nine critical dimensions in total to quantify the actual contribution of each agent to task completion.

To clarify the contribution of each major component in the proposed framework, ablation experiments are conducted under a unified and controlled experimental setting. All ablation experiments strictly follow the same experimental configuration as the comparative experiments described earlier, including the urban environment, task distribution, agent composition, and evaluation metrics.

The baseline configuration corresponds to the complete proposed framework, which incorporates full heterogeneous collaboration, offline multi-objective task allocation, online adaptive scheduling, and predictive coordination modules. For each ablation scenario, only the targeted module is removed or disabled, while all other components and parameter settings remain unchanged.

To ensure fairness and reproducibility, all ablation scenarios share identical initial conditions, environmental layouts, and operational constraints. Each scenario is executed multiple times, and the reported results represent average performance values.

Three ablation scenarios are designed to isolate the impact of key system components:

(1) Without online adaptive scheduling, where task reallocation during execution is disabled and only the initial offline plan is used;

(2) Without heterogeneity modeling, where agent-specific constraints and capabilities are ignored, and tasks are allocated uniformly;

(3) Without robustness-oriented coordination, where predictive feedback and disturbance handling mechanisms are removed.

All other system components remain unchanged to ensure that observed performance variations can be attributed to the removed module.
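One way to keep such ablations controlled is to derive every scenario from a single full configuration by flipping exactly one switch. The sketch below illustrates that idea; the class and field names are hypothetical and do not come from the authors' code.

```python
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class FrameworkConfig:
    """Hypothetical feature switches for the three ablated components."""
    online_adaptive_scheduling: bool = True
    heterogeneity_modeling: bool = True
    robustness_coordination: bool = True


FULL = FrameworkConfig()

# Each scenario copies the full configuration and disables exactly one module.
ABLATIONS = {
    "full": FULL,
    "no_online_adaptive_scheduling": replace(FULL, online_adaptive_scheduling=False),
    "no_heterogeneity_modeling": replace(FULL, heterogeneity_modeling=False),
    "no_robustness_coordination": replace(FULL, robustness_coordination=False),
}


def disabled_modules(cfg: FrameworkConfig) -> list:
    """List the names of the modules switched off in `cfg`."""
    return [name for name, enabled in asdict(cfg).items() if not enabled]


for scenario, cfg in ABLATIONS.items():
    print(scenario, disabled_modules(cfg))
```

Because the configuration is frozen and each variant is built with `replace`, a scenario cannot accidentally differ from the full system in more than the targeted module.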

Table 5 presents the impact of each agent's absence on these nine key metrics under different ablation conditions. The comparison clearly illustrates the specific contributions of each agent to coverage, working efficiency, redundancy reduction, task balance, and overall system robustness.

Table 5. Key performance metrics under different agent ablation conditions.

| Ablation scenario | CR (%) | WT (min) | WE (%) | EC (MJ) | RD (%) | TB (%) | CC (%) | FTR (%) | CF (%) |
|---|---|---|---|---|---|---|---|---|---|
| No UAV | 72.45 | 158.23 | 65.12 | 135.45 | 18.34 | 63.12 | 60.45 | 68.12 | 11.23 |
| No large spraying vehicle | 81.12 | 148.56 | 70.45 | 138.23 | 16.78 | 66.34 | 64.12 | 71.45 | 9.78 |
| No small electric sprayers | 83.45 | 145.78 | 72.34 | 136.78 | 15.45 | 68.23 | 66.12 | 73.12 | 8.89 |
| No ground personnel | 85.23 | 143.56 | 73.78 | 135.12 | 14.78 | 69.12 | 68.45 | 74.23 | 8.12 |
| All agents present | 91.56 | 132.51 | 77.45 | 132.23 | 13.12 | 72.34 | 74.02 | 78.23 | 7.62 |

From Table 5, it can be observed that the absence of UAVs leads to a significant decline in CR and WE, while redundancy and conflict rates increase notably, indicating that UAVs play a core role in rapid coverage and task completion. The absence of large spraying vehicles or small electric vehicles results in slight decreases in CC and TB, reflecting the role of ground vehicles in complementing aerial coverage blind spots and optimizing overall task paths. The absence of ground personnel has a pronounced impact on fault tolerance and the handling of abnormal tasks, highlighting that ground personnel are irreplaceable in task recovery and in responding to local unexpected events in complex scenarios.
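The size of each agent's contribution can be read off Table 5 as the difference between an ablated scenario and the full system. The sketch below computes these differences for a subset of the Table 5 columns and ranks the scenarios by coverage loss; the variable names are introduced here for illustration.

```python
# Subset of Table 5 averages (values copied from the table).
full = {"CR": 91.56, "WT": 132.51, "WE": 77.45, "FTR": 78.23, "CF": 7.62}
ablated = {
    "No UAV": {"CR": 72.45, "WT": 158.23, "WE": 65.12, "FTR": 68.12, "CF": 11.23},
    "No large spraying vehicle": {"CR": 81.12, "WT": 148.56, "WE": 70.45, "FTR": 71.45, "CF": 9.78},
    "No small electric sprayers": {"CR": 83.45, "WT": 145.78, "WE": 72.34, "FTR": 73.12, "CF": 8.89},
    "No ground personnel": {"CR": 85.23, "WT": 143.56, "WE": 73.78, "FTR": 74.23, "CF": 8.12},
}

# Difference of each ablated scenario from the full system, per metric.
delta = {
    scenario: {m: round(row[m] - full[m], 2) for m in full}
    for scenario, row in ablated.items()
}

# Scenarios ordered from the largest to the smallest drop in coverage rate.
ranking = sorted(delta, key=lambda s: delta[s]["CR"])

for scenario in ranking:
    print(scenario, delta[scenario])
```

On these averages, removing UAVs produces the largest coverage drop (about 19.1 percentage points), followed by large spraying vehicles, small electric sprayers, and ground personnel.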

To visually present the impact of each agent on system performance, a radar chart is used to illustrate the variations of key metrics under different ablation combinations, accompanied by a table listing the specific values for each experimental condition. The radar chart clearly reflects the contribution of each agent in coverage, efficiency, and robustness, while also highlighting the advantages of multi-agent collaboration in ensuring task completion, reducing conflicts, and improving system stability, providing a quantitative basis for optimizing multi-platform operations.

Figure 9 depicts the impact of each operational agent on the nine key system metrics under different ablation combinations. The radar chart allows for an intuitive comparison of system performance trends when specific agents are removed, against the full collaborative scenario with all agents present.

The figure illustrates the performance of multi-agent task execution across nine key metrics under different ablation combinations. Overall, the full multi-agent collaboration (all agents) demonstrates the best performance in CR, WE, TB, CC, and FTR, indicating that the synergy of UAVs, ground vehicles, and ground personnel effectively enhances overall system performance and robustness.

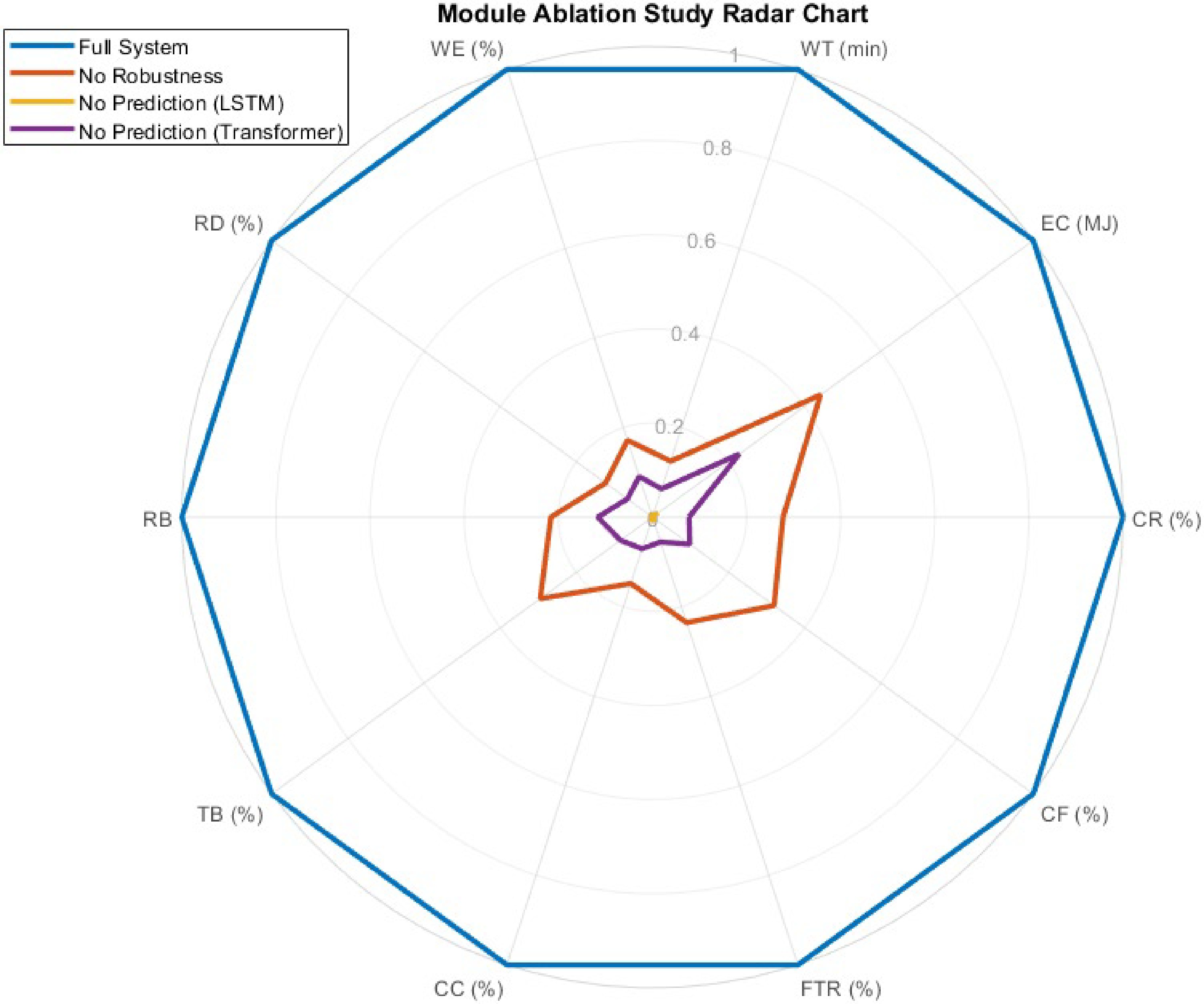

Module ablation

-

This ablation removes the predictive coordination component, aiming to examine its effectiveness in improving robustness against task uncertainty and agent disturbances.

In terms of experimental design, the robustness module or the prediction module is removed to observe the differences in system performance under dynamic task scheduling, anomaly handling, and response to unexpected events. Radar charts are used to visually illustrate the changes in each metric across different ablation combinations, while tables provide the exact numerical results for each experimental condition, enabling quantitative analysis of module contributions. Table 6 presents the experimental results for the full system and the ablation scenarios across nine key performance indicators.

Table 6. Module ablation experiment results under different functional configurations.

| Module / scenario | CR (%) | EC (MJ) | WT (min) | WE (%) | RD (%) | RB | TB (%) | CC (%) | FTR (%) | CF (%) |
|---|---|---|---|---|---|---|---|---|---|---|
| Full system | 91.56 | 132.23 | 132.51 | 77.45 | 13.12 | 0.88 | 72.34 | 74.02 | 78.23 | 7.62 |
| No robustness module | 88.34 | 134.12 | 137.45 | 74.12 | 14.78 | 0.81 | 69.45 | 70.12 | 73.45 | 9.12 |
| No prediction module (LSTM) | 87.12 | 135.56 | 138.23 | 73.45 | 15.01 | 0.79 | 68.23 | 69.45 | 72.12 | 9.78 |
| No prediction module (Transformer) | 87.45 | 134.78 | 137.89 | 73.78 | 14.89 | 0.80 | 68.56 | 69.78 | 72.45 | 9.56 |

From the table, it can be observed that removing the robustness module or the prediction module leads to varying degrees of decline in system performance across CR, WE, TB, and CC. Notably, the absence of the prediction module has a particularly significant impact on task delay rate (DR) and CF, resulting in increased RD and reduced FTR. In contrast, the complete system maintains high levels across all nine performance indicators, demonstrating that the synergistic operation of functional modules effectively enhances task completion efficiency and overall system stability.

Figure 10 presents the radar chart from the functional module ablation experiments, comparing the complete system with configurations missing either the robustness module or the prediction module (LSTM/Transformer) across nine key performance indicators. It can be observed that the full system achieves the highest levels in CR, WE, TB, CC, and RB, demonstrating the significant performance enhancement provided by multi-module collaboration in multi-platform task execution.

The ablation results demonstrate that removing any individual component leads to noticeable performance degradation. In particular, disabling online adaptive scheduling increases task completion time under dynamic conditions, while ignoring agent heterogeneity results in severe workload imbalance. The absence of robustness-oriented coordination further amplifies sensitivity to disturbances, confirming that the proposed framework benefits from the synergistic integration of all components rather than isolated algorithmic effects. Likewise, removing the predictive coordination module leads to noticeable performance degradation under dynamic task conditions, which supports its role in improving system robustness, especially in scenarios with frequent task updates.

-

This study addresses challenges in urban public health disinfection and multi-agent collaborative operations, including low operational efficiency, uneven coverage, high energy consumption, and limited scheduling robustness. The proposed intelligent task allocation and path planning framework enables multi-level, multi-type agent collaboration. By constructing a multi-objective task allocation model targeting operational efficiency, EC, TB, CC, and system robustness, the framework achieves global optimization and dynamic scheduling of multi-agent tasks.
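As a rough illustration of how several objectives can be traded off when comparing candidate allocations, the sketch below uses a plain weighted-sum scalarization. This is a generic technique chosen for illustration, not the optimization model used in this study. The `Allocation` fields, the weights, and the candidate values are all hypothetical, and every objective is assumed to be pre-normalized to the range [0, 1].

```python
from dataclasses import dataclass

# Hypothetical weights for illustration only; they sum to 1.
WEIGHTS = {"efficiency": 0.30, "energy": 0.20, "tb": 0.15, "cc": 0.15, "robustness": 0.20}


@dataclass(frozen=True)
class Allocation:
    """One candidate task allocation with objectives normalized to [0, 1]."""
    name: str
    efficiency: float   # higher is better
    energy: float       # normalized EC, lower is better
    tb: float           # task balance, higher is better
    cc: float           # coverage continuity, higher is better
    robustness: float   # higher is better


def scalarize(a, weights=WEIGHTS):
    """Weighted-sum score; energy is inverted so that a higher score is better."""
    return (weights["efficiency"] * a.efficiency
            + weights["energy"] * (1.0 - a.energy)
            + weights["tb"] * a.tb
            + weights["cc"] * a.cc
            + weights["robustness"] * a.robustness)


def best_allocation(candidates, weights=WEIGHTS):
    """Pick the candidate with the highest scalarized score."""
    return max(candidates, key=lambda a: scalarize(a, weights))


# Hypothetical candidates: A is faster but energy-hungry, B is more balanced.
CANDIDATES = [
    Allocation("A", efficiency=0.9, energy=0.8, tb=0.6, cc=0.7, robustness=0.7),
    Allocation("B", efficiency=0.8, energy=0.4, tb=0.8, cc=0.8, robustness=0.8),
]

if __name__ == "__main__":
    for c in CANDIDATES:
        print(c.name, round(scalarize(c), 3))
    print("selected:", best_allocation(CANDIDATES).name)
```

With these illustrative weights the balanced candidate B is selected. Shifting all weight onto efficiency would select A instead, which is the kind of trade-off that adjusting multi-objective weights controls.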

The dynamic path planning algorithm is tailored to different agent types (UAVs, large spraying vehicles, small electric sprayers, and ground personnel) and incorporates conflict detection, obstacle avoidance, and task priority adjustment mechanisms, ensuring safe, continuous, and optimal coverage. A real-time monitoring and anomaly detection mechanism allows dynamic responses to emergent events and environmental changes. Experimental results demonstrate that the proposed approach outperforms traditional centralized and static scheduling methods in operational efficiency, coverage, energy optimization, task completion time, and scheduling robustness. Multi-objective optimization balances efficiency and resource consumption, while TB and CC metrics ensure uniform and continuous operations. Anomaly detection further enhances adaptability to unexpected tasks. Validation across different scenarios and task scales shows high scalability and generality, offering a practical technical solution for urban disinfection, multi-agent collaborative operations, and intelligent inspection tasks.

Despite these promising results, several directions for improvement remain. In complex urban environments, agents face challenges such as multi-level buildings, dynamic weather conditions, traffic constraints, and moving obstacles. Future work will focus on enhancing adaptability under such conditions through multi-level task allocation, dynamic path planning optimization, and adaptive adjustment of multi-objective weights. Integrating reinforcement learning, deep multi-agent systems, and graph neural networks could further improve collaborative decision-making, operational efficiency, resource utilization, and task completion quality.

Additionally, leveraging multi-source sensor data, historical task data, and environmental information can support more precise anomaly prediction and dynamic task prioritization models, enabling rapid responses to emergent tasks and environmental changes. Energy consumption models and charging scheduling strategies for different agent types will be developed to optimize energy use and ensure sustainable operations.

-

The proposed framework provides an effective and scalable solution for multi-agent collaborative operations in urban environments. It achieves significant improvements in operational efficiency, coverage continuity, energy optimization, task completion, and system robustness, compared with traditional centralized and static methods. The integration of multi-objective optimization, dynamic path planning, and anomaly detection ensures safe, continuous, and adaptive task execution.

Future research will focus on enhancing adaptability to complex urban conditions, integrating advanced AI techniques for higher-level decision-making, and optimizing energy management for sustainable operations. The framework has potential applications beyond urban disinfection, including urban inspection, environmental monitoring, emergency response, and intelligent logistics. With these developments, multi-agent collaborative systems can achieve higher intelligence, efficiency, and robustness, providing strong technical support for smart city development and emergency management.

This research was financially supported by the Medical Research Project of Foshan (Grant No. 20240170); the Guangdong Provincial Key Laboratory of Intelligent Port Security Inspection (Grant No. 2023B1212010011); the Foshan Self-Funded Science and Technology Innovation Projects (Grant Nos. 2320001007544 and 2320001007511); the Guangzhou Nansha District Innovation Team Project (Grant No. 2021020TD001); and the Guangzhou Key Research and Development Program (Grant Nos. 2024B01W0002 and 2025B01J4003).

-

This study did not involve human participants, animals, or clinical data. Ethical approval was therefore not required.

-

The authors confirm their contributions to the paper as follows: conceptualization, writing − original draft preparation: Zhang L; methodology, visualization: Zhang N; software: Yang J; validation: Li Z; data curation: Zhang L, Wu Y; writing − review and editing: Li Z, Wu Y. All authors reviewed the results and approved the final version of the manuscript.

-

The datasets generated and analyzed during the current study are owned and managed by the local government authorities. Due to data sensitivity and confidentiality agreements, the datasets are not publicly available.

-

Although author Liuhua Zhang is an employee of Guangzhou Ceprei Certification Center Services Limited, the work presented herein constitutes independent academic research, and is not related to the commercial interests of the company. The authors declare that no financial or other contractual agreements between the company and the authors or their institutions influenced the study design, results, interpretation, or reporting of this work.

- This article is an open access article distributed under the Creative Commons Attribution License (CC BY 4.0); see https://creativecommons.org/licenses/by/4.0/.

-

About this article

Cite this article

Zhang L, Li Z, Zhang N, Yang J, Wu Y. 2026. An intelligent task allocation and path planning framework for multi-level and multi-type air–ground–human collaboration in public health disinfection. International Journal of Micro Air Vehicles 18: e002 doi: 10.48130/mav-0026-0002

- Received: 05 November 2025

- Revised: 08 January 2026

- Accepted: 03 February 2026

- Published online: 29 April 2026

Abstract: With the increasing demands of urban public health management and emergency epidemic control, traditional single-agent disinfection operations cannot deliver efficient, comprehensive, and sustainable disinfection: they suffer from limited coverage, low operational efficiency, high energy consumption, and poor scheduling robustness. To address these challenges, this paper proposes an intelligent task allocation and path planning framework for multi-level, multi-type agent collaboration, integrating UAVs, large spraying vehicles, small electric sprayers, and ground personnel. Specifically, a multi-objective optimization task allocation model is developed, targeting operational efficiency, energy consumption, and system robustness, while considering task balance, coverage continuity, and fault tolerance. A collaborative path planning strategy is proposed that dynamically generates optimal coverage paths according to agent characteristics, with conflict detection and adjustment mechanisms ensuring safety and continuity. Furthermore, dynamic scheduling and anomaly prediction modules monitor execution status and enable task reassignment, enhancing system adaptability and reliability. Evaluations on real-world datasets and large-scale simulations demonstrate that the proposed framework outperforms traditional methods in multi-agent collaboration efficiency, coverage, task completion time, and energy consumption, effectively addressing complex operational scenarios. This study provides an efficient, intelligent, and scalable solution for urban public health disinfection, and offers theoretical and methodological insights for emergency management, urban inspection, and multi-agent task planning.